00:00:00.900

Welcome to the presentation.

00:00:12.440

I'm excited to be back at RailsConf. This is my first conference since 2019, and it's really nice to see everyone here. How many of you missed this?

00:00:24.060

My name is Brad Urani, and I'm a principal engineer at Procore. I live in Austin, Texas.

00:00:31.560

At Procore, we build software for the construction industry. If you're involved in building a skyscraper, a shopping mall, or a subway system, you might be using our software. It's a large suite of enterprise tools, and our tagline is, "We build the software that builds the world."

00:00:43.020

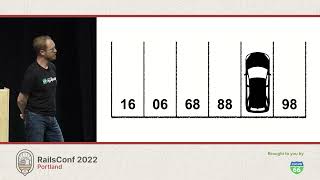

We have a strong team of around 200 to 300 Rails developers. We grew from a small shop, starting on Rails 0.9 over 16 years ago. Now, we might have one of the world's largest Rails applications. I know Shopify has a significant app, but ours is quite large too.

00:01:04.619

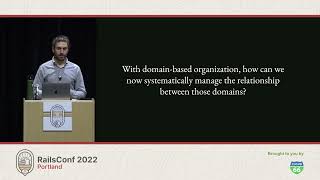

We have over 43,000 Ruby files, more than a thousand database tables, and somewhere between 250 and 300 developers working on it daily. With this growing complexity comes some challenges. While this model of a giant Rails app has served us well, it can't scale forever. At some point, we need to explore different architectures.

00:01:30.840

That raises important questions: How do we split the world's largest monolith? How does our existing architecture communicate with new services? It's not obvious. How do we implement multi-region strategies, so our AWS resources can communicate across North America and Europe? Additionally, how do we extract components like search and reporting that often cause significant performance issues?

00:01:49.320

These questions lead us to rethink our architecture. At Procore, we are addressing these problems through the development of a distributed systems strategy, which is fundamental for our service-to-service communication. Our solution allows for efficient data replication across zones and supports self-service creation of services that can publish and consume Kafka streams.

00:02:27.420

Streaming goes by various names. You might hear it called an event bus, a transaction log, or stream processing. At the core of this is Apache Kafka, a distributed log created by Jay Kreps at LinkedIn. Kafka is the dominant option, with the most integrated tools and libraries, but alternatives like Amazon Kinesis and Google Cloud Pub/Sub exist.

00:03:12.060

In Kafka, a publisher sends data to a topic, which acts as a single stream, and a consumer reads from that topic. I'll go through how we can read from and write to Kafka from Ruby.

00:03:43.920

There's a Ruby gem called ruby-kafka, maintained by the folks at Zendesk, which simplifies the process. To get started, you instantiate a Kafka client and configure it with broker names. Then you deliver a message, specifying the intended topic and, optionally, a partition key.
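
As a rough sketch of that publishing step (not the code from the slides), the helper below publishes a registration event through whatever client it is given. `deliver_message` with `topic:` and `partition_key:` is the ruby-kafka call for synchronous publishing. `RecordingClient`, `publish_user_registered`, and the topic name are hypothetical, and the stand-in client exists only so the example runs without a broker.

```ruby
require "json"

# Stand-in for a Kafka client (an assumption for this sketch). It records
# calls instead of talking to a broker, using the same method name and
# keywords as ruby-kafka's synchronous deliver_message.
class RecordingClient
  attr_reader :delivered

  def initialize
    @delivered = []
  end

  def deliver_message(value, topic:, partition_key: nil)
    @delivered << { value: value, topic: topic, partition_key: partition_key }
  end
end

# Hypothetical helper: serializes the event as JSON and publishes it,
# keyed by user ID so all events for one user share a partition.
def publish_user_registered(client, user_id:, email:)
  payload = JSON.generate({ user_id: user_id, email: email })
  client.deliver_message(payload, topic: "user-registrations", partition_key: user_id.to_s)
end

client = RecordingClient.new
publish_user_registered(client, user_id: 42, email: "ada@example.com")
puts client.delivered.first[:value]
```

With the real gem you would pass a client built with `Kafka.new` and your broker list in place of the stand-in.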

00:04:58.020

The gem also supports asynchronous message delivery, where messages are queued in memory and sent by a background thread, so requests in your Rails application don't block. However, it's essential to note that this async mode lacks durability: messages still sitting in the queue are lost if your server crashes. I'll discuss what to consider in terms of production consistency throughout this talk.

00:05:47.880

Kafka topics are divided into partitions to enhance scalability. Each message carries a partition key, such as a user ID, and messages with the same key always go to the same partition, so order is guaranteed within that subset of messages. This enables parallel processing: multiple consumers work on different partitions, each processing batches of messages.
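
To make the partition key idea concrete, here is a minimal sketch that routes events to partitions by hashing the key. The CRC32-modulo scheme, the partition count, and the name `partition_for` are assumptions for illustration; real clients pick their own hash function, but the property is the same: equal keys always map to the same partition.

```ruby
require "zlib"

PARTITION_COUNT = 4 # assumed number of partitions for the topic

# Maps a partition key to a partition number. Equal keys always hash
# to the same partition, which is what preserves per-key ordering.
def partition_for(key, partition_count = PARTITION_COUNT)
  Zlib.crc32(key.to_s) % partition_count
end

events = [
  { user_id: 1, action: "registered" },
  { user_id: 2, action: "registered" },
  { user_id: 1, action: "changed_email" },
  { user_id: 1, action: "deleted" }
]

# Append each event to its partition in arrival order.
partitions = Hash.new { |hash, number| hash[number] = [] }
events.each { |event| partitions[partition_for(event[:user_id])] << event }

user_one = partitions[partition_for(1)].select { |event| event[:user_id] == 1 }
puts user_one.map { |event| event[:action] }.join(", ")
```

Events for different users may share a partition, but the events for any one user never get split across partitions, so a single consumer sees them in the order they were published.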

00:06:49.020

Reading from Kafka in Ruby again uses the ruby-kafka gem, configured with connection settings like the brokers. The consumer subscribes to a topic and runs a continuous loop that processes batches of messages. How you handle exceptions inside that loop is critical for maintaining the ordering guarantees.

00:07:24.060

You can make any Rails app a Kafka consumer by creating a class that initializes your Rails autoloaders, so that it can use your existing model classes while skipping the web server layer.

00:08:00.840

Kafka offers some unique guarantees compared to other messaging systems. It ensures at-least-once delivery, meaning that if a consumer fails to process a message, the message is delivered again until it is processed successfully. This is crucial for making sure that no message gets lost.
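
The mechanics behind at-least-once delivery can be sketched with an in-memory log: the consumer only advances its committed offset after the handler succeeds, so a failed message is seen again on the next poll. `PartitionConsumer` and its methods are hypothetical names for this simulation, not part of any Kafka library.

```ruby
# In-memory simulation of a consumer reading one partition.
class PartitionConsumer
  attr_reader :committed_offset

  def initialize(log)
    @log = log
    @committed_offset = 0
  end

  # Processes messages starting at the committed offset. The offset is
  # advanced only after the handler returns, so a failure leaves it
  # untouched and the next poll retries the same message.
  def poll
    while @committed_offset < @log.length
      message = @log[@committed_offset]
      begin
        yield message
      rescue StandardError
        return false
      end
      @committed_offset += 1
    end
    true
  end
end

log = %w[a b c]
attempts = Hash.new(0)
processed = []

# Handler that fails the first time it sees "b", then succeeds.
handler = lambda do |message|
  attempts[message] += 1
  raise "downstream unavailable" if message == "b" && attempts[message] == 1
  processed << message
end

consumer = PartitionConsumer.new(log)
consumer.poll(&handler) until consumer.committed_offset == log.length

puts processed.join(", ")
```

Because the consumer stops at the failed message instead of skipping ahead, order within the partition is preserved as well.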

00:08:52.920

Furthermore, Kafka preserves a guaranteed order of messages within a partition, which is vital for consistent data flow. With this, we can effectively utilize a Rails app writing to a database while making sure downstream processes maintain the same updated data.

00:09:47.700

Multicasting allows multiple consumers to listen to a single topic, thus enhancing flexibility in the system. Kafka's retention mechanism permits re-consumption of messages, as long as they are retained according to a set retention schedule.
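
A small in-memory sketch shows why multicasting and re-consumption fall out of the same design: the topic keeps the messages, and each group of consumers tracks only its own offset. The `Topic` class and the group names are hypothetical, and retention limits are left out.

```ruby
# In-memory stand-in for a topic with per-group read positions.
class Topic
  def initialize
    @log = []
    @offsets = Hash.new(0) # consumer group name => next offset to read
  end

  def publish(message)
    @log << message
  end

  # Returns the messages this group has not read yet and advances
  # only that group's offset.
  def read(group)
    unread = @log[@offsets[group]..] || []
    @offsets[group] = @log.length
    unread
  end

  # Moves a group's offset back so retained messages are consumed again.
  def rewind(group, offset = 0)
    @offsets[group] = offset
  end
end

topic = Topic.new
topic.publish("user 1 registered")
topic.publish("user 2 registered")

email_batch = topic.read("email-service")
search_batch = topic.read("search-indexer") # unaffected by the email group
second_email_batch = topic.read("email-service")

topic.rewind("search-indexer")
replayed_batch = topic.read("search-indexer")

puts replayed_batch.length
```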

00:10:38.760

If you're looking to implement Kafka, various options exist, such as AWS's managed service MSK, Heroku, and Confluent, which offers extensive tooling for Kafka implementations.

00:11:35.880

The classic question that emerges is: What is the best way for one Rails app to communicate with another? While an HTTP POST might seem simple, it runs into problems when errors occur, such as the downstream service being unavailable. The latency of synchronous communication is also something to consider.

00:12:36.300

In contrast, Kafka allows for asynchronous interactions, enabling quick write operations without waiting for downstream acknowledgment.

00:13:23.040

However, keep in mind that Kafka messages can be retried, which can lead to duplicates. Your consumer logic therefore needs to be idempotent, so that processing the same message twice doesn't produce duplicate inserts.
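
One common way to get idempotency is to give every event a unique ID and skip IDs you have already handled. The sketch below keeps the seen IDs in memory. In a real consumer they would more likely live in the database, for example behind a unique index. `WelcomeEmailConsumer`, `event_id`, and the event shape are all assumptions.

```ruby
require "set"

# Hypothetical consumer that inserts one welcome-email row per event.
class WelcomeEmailConsumer
  attr_reader :sent_emails

  def initialize
    @sent_emails = []
    @seen_event_ids = Set.new
  end

  # Set#add? returns nil when the ID is already present, so a
  # redelivered event is skipped instead of inserted twice.
  def handle(event)
    return :skipped unless @seen_event_ids.add?(event[:event_id])

    @sent_emails << { to: event[:email] }
    :applied
  end
end

consumer = WelcomeEmailConsumer.new
event = { event_id: "evt-1", email: "ada@example.com" }

first_result = consumer.handle(event)
second_result = consumer.handle(event) # simulated redelivery

puts consumer.sent_emails.length
```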

00:13:59.100

Let's delve into some use cases. The first is service-to-service communication when a user registers. With Kafka, actions such as sending a welcome email or adding a search record can each happen independently, and each benefits from the retry mechanism.

00:14:48.360

When the same data has to live in several different kinds of storage, Kafka keeps those stores consistent. If a Rails app writes to Kafka, the data flows into each system in the same order.

00:15:24.600

Another concept to explore is Kafka Connect, which facilitates simpler production and consumption of data without the need to build consumer and producer code from scratch.

00:16:00.300

Companies increasingly recognize the value of having a data lake, where Kafka can back up topics to cloud storage, making it easier to retrieve historical data.

00:17:00.840

Data replay lets you reprocess a past period, even the entirety of previous years, which is useful for things like training machine learning models and rebuilding indexes.

00:18:06.420

Event sourcing decouples database writes from the application logic: the application writes events to Kafka first, and consumers then process those events into the databases, so the log of events becomes the source of truth.

00:18:54.720

This pattern promotes consistency, especially when dealing with complex applications involving multiple states. It also allows separate management of read and write models, aiding in efficiency.
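
As a minimal illustration of event sourcing, the current state below is never written directly. It is derived by replaying an ordered list of events, and a read model is then computed from that state. The event types, field names, and the `apply_event` and `replay` helpers are invented for this sketch.

```ruby
# Ordered event log, standing in for a Kafka topic (hypothetical events).
EVENTS = [
  { type: :project_created, id: 1, name: "Subway line" },
  { type: :project_renamed, id: 1, name: "Subway line A" },
  { type: :project_created, id: 2, name: "Shopping mall" },
  { type: :project_archived, id: 1 }
].freeze

# Returns a new state hash with one event applied. Unknown event
# types are ignored so old consumers tolerate new events.
def apply_event(state, event)
  case event[:type]
  when :project_created
    state.merge(event[:id] => { name: event[:name], archived: false })
  when :project_renamed
    state.merge(event[:id] => state.fetch(event[:id]).merge(name: event[:name]))
  when :project_archived
    state.merge(event[:id] => state.fetch(event[:id]).merge(archived: true))
  else
    state
  end
end

# Rebuilds the write-side state from scratch by folding over the log.
def replay(events)
  events.reduce({}) { |state, event| apply_event(state, event) }
end

projects = replay(EVENTS)

# Read model: just the names of projects that are still active.
active_names = projects.values.reject { |project| project[:archived] }.map { |project| project[:name] }
puts active_names.join(", ")
```

Replaying the same events always yields the same state, which is what makes it safe to rebuild a read model or add a new one later.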

00:19:55.140

Region-to-region replication is another pivotal use case, facilitating the sharing of records such as customer details across geographical boundaries while maintaining consistency.

00:20:55.080

Tools like MirrorMaker, which in its current version is built on Kafka Connect, can replicate topics across regions, enhancing service availability.

00:21:48.420

A critical consideration is dual writes, where the application synchronously writes to both Kafka and a database. If one write succeeds and the other fails, the two end up inconsistent. Change Data Capture, via tools like Debezium, helps with this.

00:22:48.540

By tracking database transaction logs and coordinating them with Kafka, we can maintain data integrity and prevent dual write issues.

00:23:55.740

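The transactional outbox pattern also helps decouple streams from database writes. The application writes the event to an outbox table in the same database transaction as the data change, so both commit or roll back together, and a separate process publishes the outbox rows to Kafka.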
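
Here is a minimal in-memory sketch of an outbox, assuming a toy `Database` class with an all-or-nothing `transaction` method. In a Rails app the database transaction would play that role. `register_user`, `relay`, and the row layout are hypothetical.

```ruby
# Toy database with two tables and a naive transaction that restores
# a snapshot of both tables if the block raises.
class Database
  attr_reader :users, :outbox

  def initialize
    @users = []
    @outbox = []
  end

  def transaction
    snapshot = [@users.dup, @outbox.dup]
    yield self
  rescue StandardError
    @users, @outbox = snapshot
    raise
  end
end

# The business row and the outbox row are written in one transaction,
# so there is never a user without an event or an event without a user.
def register_user(db, email)
  db.transaction do |tx|
    raise ArgumentError, "email required" if email.to_s.empty?

    tx.users << { email: email }
    tx.outbox << { topic: "user-registrations", payload: email, published: false }
  end
end

# Relay step, run separately: publishes pending rows, then marks them.
def relay(db, publisher)
  db.outbox.reject { |row| row[:published] }.each do |row|
    publisher.call(row[:topic], row[:payload])
    row[:published] = true
  end
end

db = Database.new
published = []
publisher = ->(topic, payload) { published << [topic, payload] }

register_user(db, "ada@example.com")
relay(db, publisher)
relay(db, publisher) # nothing pending, so nothing is published again

puts published.length
```

If the relay crashed between publishing and marking a row, it would publish that row again on the next run, so consumers still need to be idempotent.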

00:24:18.840

Publishing methods can vary. Whether you're looking for synchronous safety or asynchronous speed, ensure the choice fits the needs of your application.

00:25:13.560

In summary, streaming provides a globally distributed, eventually consistent way to send messages between services asynchronously, reliably, and quickly.

00:26:18.600

Within a message are headers, a partition key, and data. Choosing a serialization format impacts your efficiency, so explore options like Avro or Protocol Buffers compared to JSON.
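
To show the parts of a message and why the format matters, the sketch below builds one message and encodes the same event two ways. The hand-rolled fixed binary layout only stands in for schema-based formats like Avro or Protocol Buffers and is not either of them. The `Message` struct, field names, and layout are assumptions.

```ruby
require "json"

# The three parts of a message: headers, partition key, and data.
Message = Struct.new(:headers, :key, :value, keyword_init: true)

# Hand-rolled layout (an assumption, not Avro or Protocol Buffers):
# 8-byte big-endian user ID, 2-byte email length, then the email bytes.
def encode_binary(event)
  email = event[:email].b
  [event[:user_id], email.bytesize, email].pack("Q>na*")
end

def decode_binary(bytes)
  user_id, length, rest = bytes.unpack("Q>na*")
  { user_id: user_id, email: rest.byteslice(0, length).force_encoding("UTF-8") }
end

event = { user_id: 42, email: "ada@example.com" }

json_value = JSON.generate(event)
binary_value = encode_binary(event)

message = Message.new(
  headers: { "content-type" => "application/json" },
  key: event[:user_id].to_s,
  value: json_value
)

puts "json bytes: #{message.value.bytesize}, binary bytes: #{binary_value.bytesize}"
```

JSON repeats the field names in every message, while a format with a known schema only sends the values, which is where the size difference comes from.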

00:27:20.760

Additionally, tools like Apache Flink are on the horizon, offering real-time stream processing capabilities, allowing for enhanced message handling.

00:28:15.120

Thank you for listening. I’m Brad Urani from Procore, and I appreciate your time. Although I have run out of time for questions, feel free to approach me afterward.