Five Sharding Data Models and Which is Right

#database-sharding

#postgresql

#data-modeling

#multitenancy

#distributed-systems

#performance

#rails-at-scale

Summarized using AI

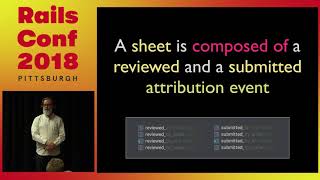

by Craig Kerstiens

In this session at RailsConf 2018, Craig Kerstiens from Citus Data addresses the challenging yet essential topic of database sharding, particularly with Postgres. Sharding, defined as the practice of breaking a database into smaller parts to improve performance, is a proven way to scale applications, as seen at companies like Google and Instagram. Craig explores five different sharding data models, emphasizing the importance of proper data modeling for successful sharding. Key points include:

- Understanding Sharding: Sharding allows for better performance through the distribution of data, enhancing write and read capabilities.

- Key Considerations: It’s vital to define the right shard count upfront, favoring a higher number of shards to manage growth and prevent future migration complications.

- Five Data Models: The models discussed include:

  - Hash-based Sharding: Uses a hash function on IDs to evenly distribute data across shards, improving access times and minimizing data skews.

  - Range-based Sharding: Efficient for time series data, where data is segmented by preset ranges (e.g., daily, weekly) for better management.

  - Geographical Sharding: Applicable if clear geographic boundaries exist, though caution is advised with data that spans these boundaries.

  - Multi-Tenant Sharding: Ideal for SaaS applications where each customer's data is kept isolated, ensuring privacy and performance.

  - Hierarchical Sharding: Optimizes queries for parallel processing; suitable for applications with extensive data processing needs.

- Practical Recommendations: Craig advises on the importance of planning shard distribution appropriately and maintaining a robust structure in the initial design phase to avoid complications in scaling.

- Conclusion: The talk stresses that with the right approaches and early groundwork, sharding can be a manageable and essential tactic for scaling database applications effectively, paving the way for future growth without the constant fear of hitting limits.

Overall, this session provides invaluable insights for developers and database administrators looking to implement sharding strategies successfully in their applications.

00:00:12.210

It's time. I think we'll go ahead and get rolling. Hi everyone, I'm Craig. I work at Citus Data, and if you're not familiar, we turn Postgres into a sharded and distributed database.

00:00:23.460

I won't talk too much about Citus in this talk, but we have a booth in the expo hall. So if you're curious, I'm happy to answer questions about Citus afterwards.

00:00:30.210

Generally, I'm going to talk about sharding and data modeling. There are a lot of mixed opinions on sharding, but it's pretty well proven that it scales. How you model your data upfront is probably the single biggest factor impacting your success or failure with it.

00:00:42.450

First, a little bit of background on who I am. I curate Postgres Weekly, so if you're a Postgres fan... actually, is anyone here not using Postgres, or not a fan? A couple of hands? Okay, I'll convince you afterwards.

00:00:56.370

But I curate Postgres Weekly, which is a newsletter similar to Ruby Weekly and other weekly newsletters, targeted more towards active developers than DBAs.

00:01:09.659

I run our database as a service at Citus, and previously, I was at Heroku, running Heroku Postgres for about five years, so I'm happy to answer questions on that as well.

00:01:15.299

So what is sharding? There's been quite a bit of talk this week, I think. Like DHH was kind of proud of how they didn't shard, yet they split things into smaller pieces by using different databases.

00:01:26.850

Rails 6 is getting some support for additional databases, but it's not necessarily sharding; it's just multiple databases that Rails is aware of, and you get to figure out what to do with them.

00:01:39.240

Sharding, by definition according to Wikipedia, is the practice of breaking your database into smaller parts, usually for performance. This could be for write performance or read performance, your pick. But it’s usually about performance. The nice thing about sharding is that breaking your database up from one huge database into a bunch of smaller parts makes everything smaller and faster.

00:01:56.610

With sharding, things like vacuuming and indexing are all faster, so everything is small and easy to work with again.

00:02:07.560

It's very well proven at scale; Google, Instagram, Salesforce, take your pick. Any large company of scale has gone down this path. You don't necessarily have to be at their scale to find the benefits.

00:02:20.910

I've seen sharding start to make sense as early as 10 gigs of data, though more commonly, it's at 100 gigs or a terabyte.

00:02:32.880

First, what is a shard? I think this is the most common misconception when talking to people about this.

00:02:38.910

We'll get into data modeling in a little bit, but first, what is actually a shard and how should you approach it? There’s like a physical VM, an instance on Amazon, or a Postgres cluster.

00:02:50.760

A lot of times people think VMs are these shards. They’re actually not. The idea of a shard is that you're going to have multiple shards existing within one VM.

00:03:03.410

There's a separation between nodes and shards. A node could even be running multiple Postgres instances, and if you've got a Postgres database with multiple tables or schemas, all of those can technically be shards.

00:03:10.230

This is pretty important early on, so that you don't tie yourself to the exact hardware. The idea with sharding is that you want to create a bunch of smaller chunks that you can easily move around.

00:03:20.880

You can move them between different nodes, scale the hardware up, scale it down, add nodes, and move the hardware and tables across. This makes your life a lot easier.

00:03:32.730

Starting with defining the right shard count upfront is pretty key. To visualize it, if I've got two different physical instances and I want to create 32 shards, underneath, I might create a table like events.

00:03:40.860

I'm going to call them events_1, events_2, events_3, up to 32. I'm going to create a bunch of shards on one server first, and if I'm using tables, create odd numbered events on one node.

00:03:50.760

On the other node, I'll place the even-numbered events. It’s really simple, but what's nice is that when I transition from 2 nodes to 4 nodes, I can just take half of those shards and move them.

00:04:10.230

You can do this using logical replication, or create a read replica, copy the shards over, and then update the metadata. Overall, it's far more manageable than having one shard per node.
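A minimal sketch of the shard-to-node bookkeeping described above, assuming 32 table shards named `events_1` through `events_32` and hypothetical node names. It only models the metadata; actually copying the data (for example with logical replication) is out of scope here.

```python
from typing import Dict, List


def place_shards(shard_count: int, nodes: List[str]) -> Dict[str, str]:
    """Assign shard tables events_1..events_N to nodes round-robin."""
    return {
        f"events_{i}": nodes[(i - 1) % len(nodes)]
        for i in range(1, shard_count + 1)
    }


def shards_to_move(old: Dict[str, str], new: Dict[str, str]) -> List[str]:
    """Shards whose node differs between two placements."""
    return [shard for shard in old if old[shard] != new[shard]]


# Two nodes: odd-numbered shards land on node_a, even-numbered on node_b.
two_nodes = place_shards(32, ["node_a", "node_b"])

# Four nodes: recompute the placement and see which shards have to move.
four_nodes = place_shards(32, ["node_a", "node_b", "node_c", "node_d"])
moved = shards_to_move(two_nodes, four_nodes)

print(len(moved), "of", len(two_nodes), "shards move")  # 16 of 32 shards move
```

Because the shard count stays at 32, going from two nodes to four is a matter of moving whole tables and updating the map, not splitting any table.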

00:04:28.169

Splitting that up inside the same table is a lot harder, so upfront, you're going to want to create a larger number of shards.

00:04:41.400

Onto the five data models we're going to walk through.

00:04:47.370

Here are the things to think about: tips, ways to rationalize through it, and ways to rule it out. The number one thing I try to do is rule out a data model by saying, 'This won't work,' and find reasons why instead of forcing it in.

00:05:05.849

When you force it in, you've likely had a bad sharding experience. If you've ever tried sharding and it just failed miserably, you probably tried to force a square peg into a round hole.

00:05:13.080

So a couple of bits on the approaches... Most people, when they think of sharding, think of two different approaches. One of them is much more common at scale, while the other is one that gets talked about more.

00:05:29.510

The first approach is hash sharding. As soon as something comes in, you hash that ID. In this case, if we have 32 different shards, we split the resulting hash value into 32 different buckets, keeping a metadata table that says this resulting value goes into this shard.

00:05:41.449

Any questions on that? It’s a bit more than that, but at a basic level, you're going to hash some ID.

00:06:01.589

If you're using Postgres, there's an internal hashing function available for all data types. You can use it for text and integers or even roll your own.

00:06:17.040

The hashing function you choose will not determine the success or failure. What you want to do is define your shards upfront, going larger than you expect.

00:06:32.660

If you think, 'At our peak, when we're at Google scale, we’ll run a thousand servers,' that's probably a good number. A thousand shards or two thousand sounds reasonable.

00:06:48.770

You also probably don't want to stick with two. You'll outgrow two nodes incredibly quickly. Some middle-ground number, based on how large you think you're going to grow, is a good start.

00:07:06.599

You can split up shards later, but that's a lot more work. Once you go through the sharding process, you want to set it up for two, three, or even five years to avoid having to redo a bunch of work.

00:07:23.789

Again, two is bad, and two million is also bad. With that many shards, the tables are so small that most are empty, and running a migration across all of them still costs you in production.

00:07:40.539

I prefer higher numbers like 128 or 256. You don't have to grow by factors of two, but it usually works pretty well.

00:07:53.750

A common misconception is that people just split on raw ranges of the ID values. You have user ID 1 and say, 'I'm going to take users 1 through 10 and put them in shard number 1.' Then shard number 2 gets users 11 through 20.

00:08:06.330

This is a huge problem since your oldest customers have the most data, and they end up all being saturated together. By hashing things upfront, you distribute your data more evenly.

00:08:17.460

You might still have a hotspot, but it’s a better way of ensuring natural data distribution upfront.
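To make the skew argument concrete, here is a small simulation under stated assumptions: 3,200 users, 32 shards, and a made-up workload in which the lowest (oldest) IDs own the most rows. `md5` stands in for whatever hash function you would really use, and the modulo bucketing is a simplification of the range-of-hash-values scheme described in the talk.

```python
import hashlib
from collections import Counter

SHARD_COUNT = 32
USER_COUNT = 3200


def hash_shard(user_id: int) -> int:
    """Shard 1..32 chosen from a hash of the ID (md5 is a stand-in hash)."""
    digest = hashlib.md5(str(user_id).encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % SHARD_COUNT + 1


def range_shard(user_id: int) -> int:
    """Shard 1..32 chosen from contiguous ID ranges (1-100, 101-200, ...)."""
    per_shard = USER_COUNT // SHARD_COUNT
    return (user_id - 1) // per_shard + 1


def rows_for(user_id: int) -> int:
    """Assumed workload: the oldest (lowest) IDs own the most rows."""
    return USER_COUNT - user_id + 1


def load_by_shard(assign) -> Counter:
    """Total rows per shard under a given assignment function."""
    load = Counter()
    for user_id in range(1, USER_COUNT + 1):
        load[assign(user_id)] += rows_for(user_id)
    return load


range_load = load_by_shard(range_shard)
hash_load = load_by_shard(hash_shard)
print("busiest shard with ID ranges:", max(range_load.values()))
print("busiest shard with hashing:  ", max(hash_load.values()))
```

With ID ranges, shard 1 holds the 100 oldest users and carries far more rows than the last shard; with hashing, old and new users are mixed across every shard.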

00:08:31.500

Take user ID 1, compute its hash in Postgres to get 46,000. User ID 2 computes to 27,000. I will have a table split for the range of hash values, so between 26,000 and 28,000, I put those in shard number thirteen.

00:08:44.100

I maintain a lookup table in my application or in my database metadata. If you're using Citus, you don't have to think about this; it's built in for you.

00:09:00.780

It’s the same idea: all the resulting hash values in a given range live in one shard, which distributes data evenly. As new IDs come in, they spread across the existing shards, so it works out nicely.
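The lookup described above can be sketched as a small metadata table of hash ranges. This is an illustration under assumptions, not how Citus or Postgres implement it: `md5` truncated to 32 bits stands in for the internal Postgres hash function, and the names `SHARD_RANGES`, `lookup_shard`, and `shard_for_user` are hypothetical.

```python
import bisect
import hashlib
from typing import List, Tuple

HASH_SPACE = 2 ** 32  # we keep 32 bits of the hash


def build_shard_ranges(shard_count: int) -> List[Tuple[int, int, int]]:
    """Metadata rows of (min_hash, max_hash, shard_number) covering the hash space."""
    width = HASH_SPACE // shard_count
    rows = []
    for n in range(shard_count):
        low = n * width
        # The last shard absorbs any remainder so the whole space is covered.
        high = HASH_SPACE - 1 if n == shard_count - 1 else low + width - 1
        rows.append((low, high, n + 1))
    return rows


SHARD_RANGES = build_shard_ranges(32)
RANGE_STARTS = [low for low, _, _ in SHARD_RANGES]


def lookup_shard(hash_value: int) -> int:
    """Find the shard whose hash range contains hash_value."""
    index = bisect.bisect_right(RANGE_STARTS, hash_value) - 1
    return SHARD_RANGES[index][2]


def shard_for_user(user_id: int) -> int:
    """Hash the ID first, then route by the hash value, never by the raw ID."""
    digest = hashlib.md5(str(user_id).encode("utf-8")).digest()
    return lookup_shard(int.from_bytes(digest[:4], "big"))


print("user 1 ->", f"events_{shard_for_user(1)}")
print("user 2 ->", f"events_{shard_for_user(2)}")
```

Keeping the ranges in a table (rather than hard-coding arithmetic) is what lets you move a shard to another node by updating metadata only.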

00:09:12.930

Now onto range partitioning. I've seen a lot more people implement this than true hash partitioning. It's common in time series.

00:09:29.820

When using range partitioning, ensure you create a new bucket. For instance, if you’re running an ad network and counting impressions by week,

00:09:43.680

you create a new table for every week of data that comes in. Make sure you keep creating those new ranges, and you'll be set.

00:09:55.080

Range partitioning becomes straightforward; you just create new tables for each time period.
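As a rough sketch of "keep creating those new ranges", the helper below computes weekly table names ahead of time. The `impressions_YYYY_MM_DD` naming scheme and the choice of Monday as the week boundary are assumptions for illustration; the actual `CREATE TABLE` step is left out.

```python
from datetime import date, timedelta
from typing import List


def week_start(day: date) -> date:
    """Monday of the week containing `day`."""
    return day - timedelta(days=day.weekday())


def partition_name(day: date, base: str = "impressions") -> str:
    """Hypothetical naming scheme: <base>_YYYY_MM_DD, keyed by the week's Monday."""
    return f"{base}_{week_start(day):%Y_%m_%d}"


def partitions_to_create(today: date, weeks_ahead: int = 4) -> List[str]:
    """Names for the current week plus a few future weeks, created in advance."""
    start = week_start(today)
    return [
        partition_name(start + timedelta(weeks=i))
        for i in range(weeks_ahead + 1)
    ]


print(partition_name(date(2018, 4, 18)))  # impressions_2018_04_16
print(partitions_to_create(date(2018, 4, 18), weeks_ahead=2))
```

Running something like this on a schedule, well before the next week starts, is one way to make sure an incoming row always has a table to land in.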

00:10:06.330

Questions? There’s quite a bit more to cover; those basic approaches apply pretty broadly to sharding.

00:10:18.840

The more interesting part is the data model. If you don't get the data model right, you're going to have a lot of problems joining data across different nodes.

00:10:27.760

Moving data across the wire is still slow; we haven't fixed that in databases. So how can you model your data for your application?

00:10:41.610

First, let’s discuss geography. The big question here is, is there a really clear geographical boundary? Usually, 99% of the time, you don't want to do this.

00:10:54.509

There's always an exception, like one I heard about when I was getting picked up at SFO Airport one time. It was the day before Thanksgiving, and the driver's passenger had said, 'I can't go see those people.' So he drove him from San Francisco to LA.

00:11:06.680

Normally, you think of Ubers having confined regional boundaries, so most drivers won’t cross large distances. This usually works well, like with services that have defined geographical boundaries.

00:11:21.340

However, bad examples arise when one side has geography while the other side does not. For example, text messages can go from one area code to another.

00:11:34.010

I grew up in an age where I kept my cell phone number, so I might have an Alabama number in California. I don’t pay attention to area codes anymore, which can span geographic boundaries.

00:11:47.520

The key issue here is that joins across geographic boundaries slow things down, and you should find out how often you join across those boundaries.

00:12:01.260

For something like Instacart, moving users’ grocery stores when they change addresses is generally okay because it's a rare operation.

00:12:14.640

You want to balance granular versus broad approaches. For example, state-level data may not work because states vary in size significantly.

00:12:29.700

Zip codes might be better for data distribution. Common use cases for this include Instacart and Uber.

00:12:39.570

If you're in a market starting in one geography and then expanding, this model could work well for you.

00:12:49.920

However, I see some issues with range sharding. For example, California gets one database, Texas another, which becomes problematic.

00:13:02.940

This leads to challenges with managing 50 different databases; you miss the proper hashing work that should have been done.

00:13:15.750

For instance, you should still hash the IDs. Give states an ID or hash their name to create manageable portions.

00:13:27.750

Multi-tenancy is my favorite model as it's typically the easiest. If you have a SaaS B2B business, each customer's data is distinct; companies like Salesforce make sure customers see only their data.

00:13:41.610

It's crucial to understand your data distribution. If one tenant holds half your data, it's problematic. A 10% share is manageable, but deep evaluations here are key for success.

00:14:05.500

Common use cases include CRM and marketing automation SaaS, where customers' data is distinct.

00:14:24.210

Start early by adding a tenant ID or customer ID to every table. This eases joining tables and sets things up nicely down the line.

00:14:37.740

Ensure your primary and foreign keys are composite. Rails supports this reasonably well, and this approach will show performance gains.

00:14:50.120

By enforcing an ID on all queries—ensuring a WHERE clause checks customer ID—this prevents data leakage. There are gems like Active Record Multi-Tenant that help enforce this.

00:15:03.720

To illustrate, in a Salesforce schema with leads, accounts, and opportunities, you’ll add an org ID to all tables. Ensure your primary key combines both identifiers.
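A minimal sketch of the org ID pattern, using SQLite in memory as a stand-in for Postgres so it runs anywhere. The table and column names (`accounts`, `leads`, `org_id`) follow the example above, but the exact schema and the `leads_for` helper are assumptions for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite needs this to enforce FKs
conn.executescript("""
CREATE TABLE accounts (
    org_id INTEGER NOT NULL,
    id     INTEGER NOT NULL,
    name   TEXT    NOT NULL,
    PRIMARY KEY (org_id, id)            -- composite key leads with the tenant
);
CREATE TABLE leads (
    org_id     INTEGER NOT NULL,
    id         INTEGER NOT NULL,
    account_id INTEGER NOT NULL,
    email      TEXT    NOT NULL,
    PRIMARY KEY (org_id, id),
    FOREIGN KEY (org_id, account_id) REFERENCES accounts (org_id, id)
);
""")


def leads_for(org_id: int) -> list:
    """All reads go through a helper that always filters on org_id."""
    rows = conn.execute(
        "SELECT id, email FROM leads WHERE org_id = ? ORDER BY id", (org_id,)
    )
    return rows.fetchall()


conn.execute("INSERT INTO accounts VALUES (1, 1, 'Acme')")
conn.execute("INSERT INTO accounts VALUES (2, 1, 'Globex')")
conn.execute("INSERT INTO leads VALUES (1, 1, 1, 'a@acme.test')")
conn.execute("INSERT INTO leads VALUES (2, 1, 1, 'g@globex.test')")

print(leads_for(1))  # [(1, 'a@acme.test')]
```

Because the foreign key includes `org_id`, a lead cannot point at another tenant's account, and every join between these tables can stay on a single shard once the data is distributed by `org_id`.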

00:15:15.960

Starting this early saves headaches later, preventing complications when backfilling large tables.

00:15:29.620

Also, watch out for anomalies in your data. I've seen situations where duplicates occur across records, leading to confusion on which is correct.

00:15:45.500

A word of caution: if you choose a schema-per-customer approach, take care. It’s great for ten customers, okay for a hundred, but could be brutal at a thousand.

00:16:01.619

You may face long migration times, as operations on thousands of schemas can take hours. Connection limits become a limiting factor too.

00:16:16.830

If you're only planning for ten customers, this approach is valid, but consider future scalability.

00:16:29.240

Now, let’s discuss optimizing your workload. You aim for parallel queries across multiple shards. You should optimize for performance and parallelism, particularly in use cases like ad networks.

00:16:48.139

For an example like web analytics, it’s common to separate by visitor ID or session ID. Most web analytics tools focus on aggregations, which fare well.

00:17:03.929

Some points to consider: SQL capabilities are limited in sharding; window functions are cumbersome as they require aggregation back to a single node.

00:17:20.530

Adopting a Hadoop MapReduce mindset allows for running jobs in parallel within nodes. This has proven to show significant performance gains.

00:17:41.500

Examples include running COUNT(*) across shards, summing data, and computing averages efficiently. But median calculations may get tricky.
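Here is a small sketch of that map-and-combine idea, with three in-memory lists standing in for shards (the data is made up). Each shard returns only a count and a sum; the coordinator combines them. The last lines show why the median does not decompose the same way.

```python
from statistics import median
from typing import Dict, List

# Made-up values living on three shards.
shards: Dict[str, List[float]] = {
    "events_1": [1.0, 2.0, 3.0],
    "events_2": [10.0, 20.0],
    "events_3": [100.0],
}


def partial(values: List[float]) -> Dict[str, float]:
    """What each shard computes locally: a count and a sum."""
    return {"count": len(values), "sum": sum(values)}


def combine(partials: List[Dict[str, float]]) -> Dict[str, float]:
    """Coordinator step: add up counts and sums, then derive the average."""
    total_count = sum(p["count"] for p in partials)
    total_sum = sum(p["sum"] for p in partials)
    return {"count": total_count, "sum": total_sum, "avg": total_sum / total_count}


result = combine([partial(values) for values in shards.values()])
print(result["count"], result["sum"])  # 6 136.0

# The median cannot be rebuilt from per-shard medians.
median_of_medians = median(median(values) for values in shards.values())
true_median = median(x for values in shards.values() for x in values)
print(median_of_medians, true_median)  # 15.0 6.5
```

Note that the average is computed from the combined sum and count, not by averaging per-shard averages, which would weight small shards too heavily.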

00:18:01.710

Now let’s highlight distinct counts, utilizing algorithms like HyperLogLog. It provides approximate distinct counts efficiently.
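Distinct counts are harder than plain counts because the same value can show up on several shards. The sketch below uses exact Python sets as a stand-in for HyperLogLog: the point it illustrates is only that per-shard results must be merged, not added. A real HyperLogLog sketch is also mergeable, but uses a small fixed amount of memory and returns an approximate count. The visitor data is made up.

```python
from typing import Dict, Iterable, List, Set

# Made-up visitor IDs seen on each shard; some visitors appear on several shards.
visits_by_shard: Dict[str, List[str]] = {
    "events_1": ["alice", "bob", "alice"],
    "events_2": ["bob", "carol"],
    "events_3": ["alice", "dave"],
}

# Wrong: adding per-shard distinct counts double-counts shared visitors.
naive_total = sum(len(set(visits)) for visits in visits_by_shard.values())


def merge(sketches: Iterable[Set[str]]) -> Set[str]:
    """Union the per-shard summaries (a set here, an HLL sketch in practice)."""
    merged: Set[str] = set()
    for sketch in sketches:
        merged |= sketch
    return merged


distinct_total = len(merge(set(visits) for visits in visits_by_shard.values()))

print(naive_total, distinct_total)  # 6 4
```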

00:18:18.630

Ordered lists also have use cases, like finding top K values using tools such as Elasticsearch.
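A top-K query can follow the same parallel pattern: each shard returns its own top K, and the coordinator merges those short lists. This sketch assumes each key (a page path here) lives on exactly one shard, so no counts need to be added together across shards; the data is made up.

```python
import heapq
from collections import Counter
from typing import List, Tuple

# Made-up page-view counts; each page lives on exactly one shard.
per_shard_counts: List[Counter] = [
    Counter({"/home": 50, "/pricing": 20, "/docs": 5}),
    Counter({"/blog": 40, "/about": 8}),
    Counter({"/signup": 30, "/faq": 12}),
]


def local_top_k(counts: Counter, k: int) -> List[Tuple[str, int]]:
    """What each shard returns: its own k largest entries."""
    return heapq.nlargest(k, counts.items(), key=lambda item: item[1])


def global_top_k(shards: List[Counter], k: int) -> List[Tuple[str, int]]:
    """Coordinator step: merge the per-shard candidates and keep the best k."""
    candidates = [item for counts in shards for item in local_top_k(counts, k)]
    return heapq.nlargest(k, candidates, key=lambda item: item[1])


top3 = global_top_k(per_shard_counts, 3)
print(top3)  # [('/home', 50), ('/blog', 40), ('/signup', 30)]
```

If the same key can appear on several shards, merging local top-K lists is no longer exact, which is one reason approximate structures are used for this kind of query.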

00:18:30.170

Graph databases are suitable for scenarios with numerous connections, such as social networking and fraud detection.

00:18:43.580

In these databases, you'll assess connections between posts and user interactions. The most common approach involves writing data twice.
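One way to read "writing data twice", sketched here as an assumption rather than as the speaker's exact design: store each edge once on the shard of its source and once on the shard of its target, so that lookups in either direction touch a single shard. The follower example, the modulo placement, and all names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set

SHARD_COUNT = 4


def shard_for(user_id: int) -> int:
    """Toy placement by modulo; a real system would hash the ID first."""
    return user_id % SHARD_COUNT


# One dictionary per shard, for each direction of the relationship.
out_edges: List[Dict[int, Set[int]]] = [defaultdict(set) for _ in range(SHARD_COUNT)]
in_edges: List[Dict[int, Set[int]]] = [defaultdict(set) for _ in range(SHARD_COUNT)]


def follow(follower: int, followee: int) -> None:
    """Write the edge twice: once keyed by each endpoint."""
    out_edges[shard_for(follower)][follower].add(followee)
    in_edges[shard_for(followee)][followee].add(follower)


def following(user_id: int) -> Set[int]:
    """Who this user follows; reads only the user's own shard."""
    return set(out_edges[shard_for(user_id)].get(user_id, set()))


def followers(user_id: int) -> Set[int]:
    """Who follows this user; also a single-shard read."""
    return set(in_edges[shard_for(user_id)].get(user_id, set()))


follow(1, 2)
follow(3, 2)
print(following(1), followers(2))  # {2} {1, 3}
```

The cost is double the writes and the need to keep both copies consistent, in exchange for never fanning a simple "who follows X" query out to every shard.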

00:18:55.540

Querying based on associations or object properties depends on your goals. Neo4j is another popular option for managing graph databases.

00:19:10.170

Time series data often revolves around a time dimension as well. However, having a timestamp alone isn't enough to designate it as a time series.

00:19:20.110

You need to assess if you’re frequently querying across time ranges or pruning old data. These factors determine if time series is a fit.

00:19:35.470

You want to leverage range partitioning when sharding. Define ranges upfront and keep up with partitions to prevent bottlenecks later.

00:19:48.710

Updating your partitions and ensuring old data is deleted keeps performance optimal. If you relied solely on automation in the past, you’ll need to ensure that it continues.
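The pruning side can be sketched as a retention check over the existing weekly partitions. The 12-week retention window, the partition names, and the idea of emitting `DROP TABLE` statements as strings are assumptions for illustration; nothing here talks to a database.

```python
from datetime import date, timedelta
from typing import Dict, List


def expired_partitions(
    existing: Dict[str, date], today: date, keep_weeks: int = 12
) -> List[str]:
    """Names of weekly partitions that end on or before the retention cutoff.

    `existing` maps a partition name to the first day of the week it covers.
    """
    cutoff = today - timedelta(weeks=keep_weeks)
    return sorted(
        name
        for name, start in existing.items()
        if start + timedelta(days=7) <= cutoff
    )


existing = {
    "impressions_2018_01_01": date(2018, 1, 1),
    "impressions_2018_04_09": date(2018, 4, 9),
    "impressions_2018_04_16": date(2018, 4, 16),
}

to_drop = expired_partitions(existing, today=date(2018, 4, 18))
statements = [f"DROP TABLE {name};" for name in to_drop]
print(statements)  # ['DROP TABLE impressions_2018_01_01;']
```

Dropping a whole partition is typically much cheaper than deleting the same rows from one large table, which is a large part of why range partitioning suits data that ages out.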

00:20:06.360

When using native partitioning in PostgreSQL, use extensions like pg_partman to manage this more effectively.

00:20:18.199

Ultimately, sharding has evolved and is more manageable than before. If you identify and implement best practices early on like adding tenant IDs, you save a lot of future work.

00:20:34.170

It’s essential to select the right approaches and be open to ruling out models that won’t work for you.

00:20:45.390

Sharding was challenging in the past, but improved tools and libraries now facilitate this process, making it much easier than it once was.

00:20:58.630

Though it’s never a trivial task, properly implemented sharding scales and mitigates future worries of data handling.

00:21:15.030

Alright, I think that's it, and I might actually have a couple of minutes for questions.

00:21:28.650

Thank you.
