00:00:12.139

Like many developers, I love working on code performance: getting deep into a problem, measuring, profiling, tweaking, and squeezing every last drop of performance out of my code. But I'm a Rails back-end developer, so I don't get to do that very often. Last year, I worked on a project where performance was key, and working on that project taught me some interesting lessons about assumptions.

00:00:37.079

First of all, hi! My name is Daniel, and I work for Go-Catalyst, a payments company based in London, UK. As you probably noticed, I’m not originally from London; I come from Argentina, so just in case you're wondering, that's the accent. Now, before I get into the interesting part of this talk, I need to give you a little background on the problem we were trying to solve.

00:01:06.600

Go-Catalyst is a bank-to-bank payments company, which basically means we serve as an API on top of the banking system. For a payments company, your customers expect you to always be available, just like they expect the electricity to always work. Therefore, for us, uptime is critical, and that means, among other things, that having good metrics for our apps is essential.

00:01:29.600

One of the main tools we use for this is a metrics server called Prometheus, and this is where our project came in: we had a significant issue trying to use it well. Prometheus basically maintains a history of all of your metrics over time, and to get those numbers, it periodically hits your app asking for them.

00:01:54.720

In order to provide metrics, your web server, which is running your app, also needs to keep a count of various things, such as how many HTTP requests it served, how long they took, or how many payments were processed in different currencies. Prometheus will reach out to your app every few seconds for these metrics, and you'll need to reply with an output that looks somewhat like this.
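For readers without the slide, here is a minimal sketch of the kind of output being described, based on the Prometheus text exposition format. The metric names, label names, and values are made up for illustration, and `render_metrics` is a hypothetical helper, not part of any Prometheus library.

```ruby
# Hypothetical in-process counters, keyed by metric name and then by label set.
COUNTERS = {
  "http_requests_total" => {
    { code: "200", method: "get" } => 1027.0,
    { code: "500", method: "post" } => 3.0
  },
  "payments_total" => {
    { currency: "GBP" } => 412.0,
    { currency: "EUR" } => 98.0
  }
}

# Render counters as text: a "# TYPE" line per metric, followed by one
# "name{label="value",...} number" line per label set.
def render_metrics(counters)
  lines = []
  counters.each do |name, series|
    lines << "# TYPE #{name} counter"
    series.each do |labels, value|
      label_text = labels.map { |k, v| %(#{k}="#{v}") }.join(",")
      lines << "#{name}{#{label_text}} #{value}"
    end
  end
  lines.join("\n") + "\n"
end

puts render_metrics(COUNTERS)
```

Each scrape returns the current totals; Prometheus itself keeps the history.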

00:02:18.450

Prometheus has libraries that help you accomplish this. These libraries allow you to declare your counters and set their values, and when scraped, they automatically generate the needed output. This is available for all major server languages, including Ruby.

00:02:35.330

However, in the Ruby ecosystem—and also in Python—the situation is a little trickier due to the Global Interpreter Lock (GIL), which prevents the execution of code in multiple threads simultaneously. Because of this, our web servers are typically not multi-threaded, as that doesn't scale effectively. Instead, in Ruby, we often use separate processes. Processes do not share memory, which effectively solves the same problem that the GIL tries to address.

00:03:06.340

In the web world, we call this a prefork server, and it's how Unicorn works. When Unicorn starts, it loads your app and then forks itself into a master orchestrator process that listens for requests, alongside a bunch of workers that actually handle those requests. When a request comes in, the master process selects a worker to handle it and returns the response to the client. This system works great, scales well across cores, and is how most Ruby web apps run in production.

00:03:32.260

However, for Prometheus’s use case, this presents a problem. Now that we have multiple processes that don’t share memory, each process maintains its own internal counters. When Prometheus hits your application and requests all of your metrics, each worker responsible for handling that request will report its own metrics, which is not what you want. You need an aggregate view of the metrics across the entire system.
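The problem can be reproduced in a few lines. This is only a sketch, assuming a Unix-like system where `fork` is available; the `run_workers` helper and the pipe-based reporting are illustrative and not how Unicorn itself works.

```ruby
# Fork a few "workers" that each bump a counter, then compare what the
# parent sees with what each worker saw.
def run_workers(worker_count = 3)
  requests_served = 0

  children = worker_count.times.map do |i|
    reader, writer = IO.pipe
    pid = fork do
      reader.close
      (i + 1).times { requests_served += 1 } # worker i "handles" i + 1 requests
      writer.puts requests_served            # report this worker's private count
      writer.close
      exit!(0)
    end
    writer.close
    [pid, reader]
  end

  per_worker = children.map do |pid, reader|
    count = reader.read.to_i
    reader.close
    Process.wait(pid)
    count
  end

  [requests_served, per_worker]
end

parent_count, per_worker = run_workers
puts "parent sees: #{parent_count}"       # the parent's copy was never touched
puts "workers saw: #{per_worker.inspect}" # each worker only knows its own count
puts "real total:  #{per_worker.sum}"
```

Whichever process answers the scrape can only report its own number, never the sum.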

00:04:02.890

Yet, because the processes don’t share memory—an intentional design choice—there’s no easy way for one process to access the numbers from another and combine them. This lack of visibility is the primary reason we use multiple processes; we want that isolation. Unfortunately, it becomes an issue for Prometheus, as the majority of Ruby web applications run in prefork servers.

00:04:50.080

Since the Prometheus library also lacks a solution for cross-process aggregation, many people using Ruby in production with Prometheus metrics face this very same challenge. We, too, encountered this issue while using Prometheus for our primary monolith written in Ruby. It wasn't just us; this has been a common problem for many people for some time.

00:05:12.590

Moreover, this Ruby library faced a number of other issues. It was one of the first client libraries created, developed before the community had established best practices for handling metrics, and that led to various complications. There were some workarounds; for instance, GitLab maintained a fork of the library with a multi-process solution, but it wasn’t getting merged back into the main repository, and it carried over all of the same issues regarding best practices.

00:05:50.720

We certainly didn’t want to use that either, nor did we want to create another fork. Instead, we communicated with the library maintainer, reached an agreement on a plan, and decided we would rewrite it while addressing all of the existing problems. However, there was one more aspect to consider: the maintainer strongly cared about performance.

00:06:18.950

The reasoning was that instrumentation should not adversely affect your app’s performance. If you're using counters and need to increment one in a tight loop, you shouldn’t even notice the overhead being added. Deep within a lengthy discussion from a 2015 ticket, I found an interesting target. While I’ve changed names to protect the innocent, one of the lead maintainers stated that incrementing a counter should take less than a microsecond.

00:06:52.220

I don't know why one microsecond was chosen as the target, but if I wanted to get our solution merged, we would really need to meet that target. Another maintainer commented that achieving this in Ruby was unlikely. This exchange led to one of my favorite responses, which basically implied that 'surely Ruby can't be that slow'.

00:07:18.810

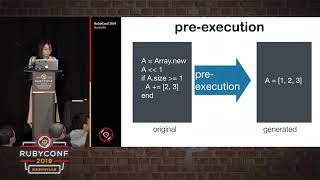

So we had to solve this problem swiftly. We had a clear performance target, and there was one more thing that the maintainer asked us to agree on regarding the library: we had these metrics classes that were employed to declare counters. Currently, they stored values in instance variables, but we wanted to change this to allow those values to be stored elsewhere.

00:07:44.080

We envisioned that these 'stores' should be interchangeable, which meant that we could go from a design like this to something a bit more flexible and modular. The reasoning behind this is that different projects have various requirements; some might run in a single-threaded, single-process environment and care deeply about performance, while others could have a web app running in multiple processes and hitting a database over the network. At that point, microsecond performance may not be a concern.
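As a rough illustration of the idea, the sketch below separates a metric class from the object that holds its values. The class names, method names, and keyword arguments are assumptions made for this example and are not the gem's actual API.

```ruby
# A minimal store: values live in a plain Hash inside the current process.
class HashStore
  def initialize
    @values = Hash.new(0.0)
  end

  def increment(key, by)
    @values[key] += by
  end

  def get(key)
    @values[key]
  end
end

# The metric class knows nothing about where values live; it only talks
# to whichever store it was given.
class Counter
  def initialize(name, store:)
    @name = name
    @store = store
  end

  def increment(labels: {}, by: 1)
    @store.increment([@name, labels], by)
  end

  def get(labels: {})
    @store.get([@name, labels])
  end
end

payments = Counter.new(:payments_total, store: HashStore.new)
payments.increment(labels: { currency: "GBP" })
payments.increment(labels: { currency: "GBP" }, by: 2)
puts payments.get(labels: { currency: "GBP" })
```

Any object that responds to `increment` and `get` could be swapped in, which is what makes it cheap to try a different storage strategy without touching the metric classes.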

00:08:01.480

Having interchangeable stores not only provided better options for our users but also simplified our development process. The challenge with our project was sharing metrics across processes. With these interchangeable stores, it became easy to conduct small experiments, gather performance data, and measure the results without the need to modify the rest of the codebase, which was a very effective strategy for experimentation.

00:08:40.310

So, to recap, we knew what we had to do: we were going to rewrite this gem to support prefork servers, providing abstracted storage for metrics and following the current best practices, all while keeping it fast. The next step was to figure out how to do this in a way that allowed us to share those metrics while remaining within that performance budget.

00:09:17.430

Now, to store our numbers, we decided to use hashes instead of integers for reasons that will become clearer later. What this means is that when I say 'increment the counter,' it effectively involves reading and writing a value within a hash. The question was whether that could be done in a microsecond.

00:09:52.210

If you had asked me whether this was feasible before embarking on this project, I would have firmly believed it was impossible due to my lack of intuition for performance at this low level. One individual claimed Ruby was slow, while another insisted that writing to hashes had to be faster than one microsecond, creating a divergence in opinions regarding Ruby’s performance.

00:10:29.710

The scale of this issue felt out of my depth compared to my usual experience in Ruby, where the smallest measurable unit of time I typically consider is a millisecond. As a web programmer, this is how I perceive the world: anything smaller than a millisecond seems insignificant. For instance, running a web app, a request comes in, and a few milliseconds are consumed by processes like Unicorn, Rack, and Rails.

00:11:10.890

Before it even reaches my code, I've lost time—like loading a user ID from the session, which takes about a millisecond—so the overall experience adds up. In a normal day as a Rails developer, I might see response times in the range of 60 to 70 milliseconds, and this is my daily life when performance monitoring.

00:11:44.239

But this perspective changes drastically depending on your field; for example, game developers operate within a strict 16-millisecond timeframe to deliver smooth gameplay at 60 frames per second. If they miss that frame rate, the game experiences lag, and frustrated gamers can quickly take to social media to voice their displeasure.

00:12:18.650

The point is that what counts as fast or slow is very subjective and relative to your specific context. Some developers may be looking to optimize memory access to shave nanoseconds off each operation, while I'm usually concerned with the millisecond scale.

00:12:44.309

It’s crucial to understand that performance discussions can sometimes lack context. Things that seem fast to a Ruby developer might be viewed very differently by someone writing kernel drivers. When discussing speed, we must recognize that perspectives differ widely.

00:13:18.450

Returning to our original question: can we increment a value in a hash in under a microsecond? As I've mentioned, my world largely exists in milliseconds, so my instincts were of limited use in assessing this problem. Yet I was presented with two distinct opinions that could both, in theory, be valid, so I had to measure and find out.

00:13:57.130

The first hurdle was that measuring this increment isn't straightforward. The standard approach is to use benchmarks, running the same operation multiple times to assess speed. But when the operation is so tiny, even the measurement process becomes a significant portion of the total time spent.

00:14:26.340

The method still works for comparative measurements, but it poses challenges when trying to assess the time taken for a very small operation. For instance, on my laptop, my measurements showed about one-half microsecond for incrementing a hash, which sounds impressive—but it's misleading. Each increment requires running a block, which, although fast in Ruby, still consumes a substantial fraction of total time.

00:14:51.799

If I modify the approach to avoid using a block, I can achieve a doubling in speed, which brings me closer to the actual performance of incrementing a hash. To get even more precise, I could strip the timing process itself from the final count, allowing me to identify how much time is wasted in the benchmarking process.

00:15:25.270

This reveals I can perform about 12 increments in a single microsecond—all well within budget. Interestingly, this seems to confirm that the initial opinion about Ruby's speed was valid after all: performing this operation is feasible in under a microsecond.
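The measurement steps described above can be sketched roughly as follows. This is an illustration under assumptions, not the speaker's benchmark script: the method names and iteration count are made up, and the numbers printed will vary by machine and Ruby version.

```ruby
require "benchmark"

N = 1_000_000

# Variant 1: every increment pays for a block call made by Integer#times.
def time_with_block(n)
  h = { a: 0 }
  Benchmark.realtime { n.times { h[:a] += 1 } }
end

# Variant 2: a bare while loop, so no block is invoked per increment.
def time_with_while(n)
  h = { a: 0 }
  i = 0
  Benchmark.realtime do
    while i < n
      h[:a] += 1
      i += 1
    end
  end
end

# Baseline: the same loop with no hash work, to estimate harness overhead.
def time_empty_loop(n)
  i = 0
  Benchmark.realtime do
    while i < n
      i += 1
    end
  end
end

def microseconds_per_op(seconds, n)
  seconds / n * 1_000_000
end

block_us  = microseconds_per_op(time_with_block(N), N)
while_us  = microseconds_per_op(time_with_while(N), N)
empty_us  = microseconds_per_op(time_empty_loop(N), N)

puts format("with block:      %.4f us per increment", block_us)
puts format("while loop:      %.4f us per increment", while_us)
puts format("minus loop cost: %.4f us per increment", while_us - empty_us)
```

Subtracting the empty-loop time is only an approximation of the harness overhead, but it shows how much of a naive measurement is the benchmark rather than the operation.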

00:15:58.990

However, as I dive deeper into the specifics, I realize that it’s not all straightforward. I'm talking about storing these metrics in hashes. Why hashes instead of simple integers? The value of metrics is that we need much more granularity than a mere count; for example, when concerned with payments, we want to capture how many transactions occurred within various currencies.

00:16:27.780

Hence, we incorporate the concept of labels, or key-value pairs. Our internal implementation stores all numbers in a hash where the key itself is also a hash. The implications for performance are significant. When accessing a hash with a constant key, the operation is swift, but when your key is another hash, it entails additional comparisons that can dramatically slow down operations.
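A small sketch of that structure, with a timing comparison between a constant key and a label-hash key. The label names and helper methods are illustrative assumptions, and no particular slowdown factor is implied; the ratio depends on the machine, the Ruby version, and the number of labels.

```ruby
require "benchmark"

# Values are stored per label set: the outer hash is keyed by another hash.
def sample_payments
  values = Hash.new(0.0)
  values[{ currency: "GBP", country: "UK", scheme: "bacs" }] += 1
  values[{ currency: "GBP", country: "UK", scheme: "bacs" }] += 1
  values[{ currency: "EUR", country: "FR", scheme: "sepa" }] += 1
  values
end

# Time n increments using a symbol key versus a three-label hash key.
def compare_key_costs(n = 200_000)
  by_symbol = Hash.new(0.0)
  by_labels = Hash.new(0.0)
  labels = { currency: "GBP", country: "UK", scheme: "bacs" }

  symbol_seconds = Benchmark.realtime { n.times { by_symbol[:total] += 1 } }
  labels_seconds = Benchmark.realtime { n.times { by_labels[labels] += 1 } }

  {
    symbol_key_us: symbol_seconds / n * 1_000_000,
    hash_key_us: labels_seconds / n * 1_000_000
  }
end

p sample_payments
p compare_key_costs
```

Every lookup with a hash key has to compute a hash code over all the label pairs and compare them for equality, which is where the extra time goes.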

00:16:56.430

For example, if we have three labels in a metric, this can end up slowing things down by roughly 60 times, which poses a serious issue for us. In an ideal scenario, if most metrics lack labels, trying to use an empty hash as a key brings our incrementing operation closer to one microsecond.

00:17:27.060

However, typically, we need to validate those labels and process them for safety, which adds overhead. By implementing a mutex for thread safety, our total time creeps up to about one and a half microseconds, and we miss the target altogether.
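To show where the mutex cost comes from, here is a minimal thread-safe store. The class name and method signatures are assumptions for this sketch, and label validation is left out.

```ruby
# A store that guards every read and write with a Mutex so that several
# threads in the same process can increment safely.
class SynchronizedStore
  def initialize
    @values = Hash.new(0.0)
    @lock = Mutex.new
  end

  def increment(labels, by = 1)
    @lock.synchronize { @values[labels] += by }
  end

  def get(labels)
    @lock.synchronize { @values[labels] }
  end
end

store = SynchronizedStore.new
threads = 4.times.map do
  Thread.new { 10_000.times { store.increment({ currency: "GBP" }) } }
end
threads.each(&:join)
puts store.get({ currency: "GBP" }) # 4 threads x 10,000 increments each
```

Taking and releasing the lock happens on every single increment, so its cost is added to each operation even when no other thread is competing for it.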

00:17:57.590

This leads to the conclusion that the second maintainer's assertion about the microsecond target was accurate. Nevertheless, the most intriguing insight I gleaned is that I initially expected a simple response to the question but found no clear winner in this scenario.

00:18:24.220

When I say fast or slow, it’s essential to understand these terms will always be relative to the context within which they are considered. Ultimately, while we could not achieve the target of maintaining microsecond increments in a multi-process environment, we opted instead to maintain the essence of our goals — that instrumentation should be unobtrusive.

00:18:51.280

This became a vital objective for us as it would impact numerous projects across various companies, so it was worthwhile to invest time in optimizing performance, even if meeting the microsecond target was unrealistic. At this point, we established a baseline for performance and a clean, refactored codebase that facilitated rapid experimentation.

00:19:32.050

We began exploring different strategies extensively. First up, we looked into PStore, a little-known data structure in Ruby's standard library. Essentially, it's a hash backed by a file on disk. Every time you write a value, the whole data structure gets serialized and stored, and reading requires access to the file, but this approach allows multiple processes to interact safely.
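Here is a small sketch of sharing a counter between processes using Ruby's standard-library `PStore` class. It assumes a Unix-like system with `fork`; the helper names, key, and worker counts are illustrative and not taken from the talk.

```ruby
require "pstore"
require "tmpdir"

# Read-modify-write inside a transaction; PStore locks the file for the
# duration, and serializes the whole structure on commit.
def increment_in_pstore(store, key, by = 1.0)
  store.transaction do
    store[key] = (store[key] || 0.0) + by
  end
end

# Fork several workers that all increment the same key in the same file,
# then read the combined total back in the parent.
def pstore_demo(workers: 2, increments: 50)
  Dir.mktmpdir do |dir|
    path = File.join(dir, "metrics.pstore")

    pids = workers.times.map do
      fork do
        store = PStore.new(path)
        increments.times { increment_in_pstore(store, :payments_total) }
        exit!(0)
      end
    end
    pids.each { |pid| Process.wait(pid) }

    # A read-only transaction is enough to fetch the aggregate.
    PStore.new(path).transaction(true) { |s| s[:payments_total] }
  end
end

puts pstore_demo
```

Unlike the in-memory counters, every worker here updates the same total, at the cost of re-serializing the entire hash on each write.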

00:20:08.420

PStore behaves much like a hash, and it lets you batch operations in a transaction to avoid hitting the disk for each individual one. Unfortunately, we quickly dismissed it, as it wasn't fast enough for our needs. Then, at Go-Catalyst, we had what seemed like a clever idea.

00:20:38.320

Why not use Redis as a shared memory chunk between different processes? I was excited at the prospect because I knew it could offer the kind of solution we were searching for, and it had worked for similar use cases in other languages. However, when I executed some benchmarks, I was left feeling disappointed.

00:21:09.410

My expectations were dashed upon discovering that Redis was actually slower than marshaling an entire hash to disk. I was disheartened because I initially believed that the speed advantages of using Redis would hold true against the alternatives.

00:21:35.310

But when I reflected more on the dynamics at play, it made sense. Communicating with Redis, even over localhost, means sending network packets back and forth, and that round trip adds latency to every operation. It was never going to compete with simple in-memory operations.

00:22:10.030

I then switched my attention to the elephant in the room: memory maps. The consensus among experts, along with the approach taken in the Python library, suggested that memory maps would solve our cross-process communication challenges. However, I was hesitant. My fear was rooted in my lack of familiarity with memory maps.

00:22:38.590

Before this project, I had never even encountered them. The documentation around memory mappings is often confusing, and while it has detailed descriptions of various flags, it lacks context on their applications for shared memory between processes.

00:23:06.046

Additionally, I discovered that accessing memory maps typically involved using file paths, which felt strange to me. Memory maps, serving as the fundamental building blocks of system kernels, act like Swiss Army knives, capable of numerous tasks but lacking straightforward examples relevant to our goals.

00:23:34.569

The method we needed was a shared-memory mapped file. What happens is that when a process opens a file and maps it, reading that memory will return the contents, and if you modify memory, that change reflects in the file. If another process maps the same file, they both access the same memory page, which is the core technique of rapid inter-process communication.

00:24:00.496

Yet, directly using memory maps from Ruby posed challenges—primarily because it had to be interfaced through C code. For someone with my limited proficiency in C, this was intimidating. Fortunately, we had the existing MMap gem, created by a developer known for successfully handling intricacies in Ruby.

00:24:32.140

The way this works is simple: you create a file, initialize it to a certain size, say one megabyte, and then map it to get a variable, m, which behaves much like a byte array, although not entirely like a Ruby array. It lets you read and write bytes, but it requires a deeper understanding of how to pack and unpack data.

00:25:02.680

If you've never used these methods, it’s best not to look them up. Ignorance is bliss in this scenario. However, if you must, when we need to write a float into memory, we pack it into eight bytes and specify where to place them within the mapped array.
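For the curious, the packing step looks roughly like this. A plain binary String stands in for the mapped memory here, since the mapping itself needs a C extension; `write_float` and `read_float` are hypothetical helper names, and the choice of little-endian byte order is an assumption.

```ruby
# Stand-in for the mapped region: 32 zero bytes in a binary string.
def new_buffer(size = 32)
  ("\x00" * size).b
end

# Pack a float into 8 bytes ("E" = double precision, little-endian)
# and place them at the given byte offset.
def write_float(buffer, offset, value)
  buffer[offset, 8] = [value].pack("E")
end

# Read 8 bytes back from the offset and decode them into a Float.
def read_float(buffer, offset)
  buffer[offset, 8].unpack1("E")
end

buffer = new_buffer
write_float(buffer, 8, 42.5)
puts read_float(buffer, 8)   # the value written above
puts read_float(buffer, 0)   # untouched bytes decode as 0.0
puts buffer.bytesize         # writing 8 bytes in place keeps the size at 32
```

Each metric value then only needs a fixed 8-byte slot and an offset, which is what makes byte-level storage attractive compared with serializing a whole hash.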

00:25:40.990

At that point, we further explored how fast these stores would be. As a brief recap, we had developed a hash without synchronization, which had clocked in under a microsecond. We created a similar version for multi-threaded applications, observing performance around one and a half microseconds, and finally identified the PStore—the only workable solution thus far for multi-process interactions at a disappointing 35 microseconds.

00:26:07.420

However, we found our MMap approach was remarkably efficient. Testing each segment reinforced our observations, so we developed a script to apply stress testing in diverse scenarios. We subjected components to excessive loads to ensure stability, running complex metric trees from a plethora of threads and processes.

00:26:41.640

But upon executing this script, I encountered a segmentation fault, something you don't expect in a high-level language like Ruby, where it rarely happens. It was related to memory being freed prematurely, which put me straight back at the root of my initial hesitancy toward memory maps.

00:27:15.195

The issue seemed tied to my code trying to resize memory maps if my original one-megabyte size was insufficient. If done incorrectly, this would lead to segmentation faults appearing well after the fact, indicating potential garbage collector issues.

00:27:49.090

Primarily, I had to isolate the root causes of these errors—something made even more challenging by the gem’s obscurity within the Ruby community. My experiments led me to isolate a common problematic sequence of events associated with resizing.

00:28:20.210

I honed my experiments, performing what felt like an elaborate dance of touch commands to flush, close, reopen, resize, remap, and only then, perhaps, avoid the dreaded segfault. Yet, despite a temporary win in overcoming the segfaults, I faced critical complications surrounding C dependency.

00:28:48.200

C extensions can be quite troublesome to manage and often lead to issues across environments, such as JRuby, where they aren’t supported, presenting ongoing installation problems similar to what many developers have experienced with other C-language integration gems.

00:29:18.470

So although the MMap approach was relatively fast and mostly working, challenges persisted. At that point, I was challenged with yet another idea. The concept was simple: what if we discarded the MMap concept entirely and went back to writing to files directly?

00:29:59.510

The transition entailed minimal change from our coding perspective, simply inserting file functions to navigate and write while bypassing the more complex MMap functionality altogether. The efficiency gain resided in forgoing cumbersome marshaling tasks over the whole hash, instead opting for streamlined byte write operations.
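A minimal sketch of that idea using only Ruby's standard `File` API: seek to a slot, write 8 packed bytes, and read them back through a separate handle to mimic a second process. The class name, fixed offsets, and helper are assumptions for illustration, and any file locking a real store would need is omitted.

```ruby
require "tmpdir"

# Stores floats at fixed byte offsets in a plain file.
class FileSlots
  def initialize(path)
    @file = File.open(path, File::RDWR | File::CREAT)
    @file.binmode
    @file.sync = true # hand each write to the OS immediately, no Ruby buffering
  end

  def write_float(offset, value)
    @file.seek(offset)
    @file.write([value].pack("E")) # 8 bytes, little-endian double
  end

  def read_float(offset)
    @file.seek(offset)
    bytes = @file.read(8)
    bytes && bytes.bytesize == 8 ? bytes.unpack1("E") : 0.0
  end

  def close
    @file.close
  end
end

# Write through one handle and read through another on the same path.
def file_slots_demo
  Dir.mktmpdir do |dir|
    path = File.join(dir, "metrics.bin")
    writer = FileSlots.new(path)
    reader = FileSlots.new(path)
    writer.write_float(0, 3.0)
    writer.write_float(8, 7.5)
    result = [reader.read_float(0), reader.read_float(8), reader.read_float(16)]
    writer.close
    reader.close
    result
  end
end

p file_slots_demo
```

Only the 8 bytes for the changed value are written, instead of marshaling the entire hash as the PStore approach does.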

00:30:31.660

I expected this new solution to be significantly slower, given the common understanding of disk speeds, so I measured it to find out how much. Astonishingly, writing to disk produced healthy numbers: approximately six microseconds versus the MMap alternative.

00:31:06.970

This revelation blew my mind. Practically, I found myself presented with a compelling solution devoid of the risks that extending functionality through a C extension might introduce. With no segfault fears or compatibility issues present, it stood to be an optimal choice.

00:31:42.170

Consequently, we opted for disk as our solution. Although not necessarily the fastest, it proved to be effectively fast enough and far more reassuring than the alternatives we’d examined. Ultimately, we successfully resolved our performance problem and had our code merged into the existing library.

00:32:15.300

Now, maintaining this gem feels rewarding, as it makes Prometheus usable in Ruby applications. There is an ironic twist here, though: traditional programming wisdom holds that memory is orders of magnitude faster than disk, yet in this case, reality tells a different story.

00:32:50.470

As I noted earlier, context heavily influences how we interpret these numbers. Writing to memory should ideally take nanoseconds rather than microseconds; the extra cost here comes from the mechanics of the MMap gem itself.

00:33:27.100

You might be skeptical of my findings about disk write performance, and rightly so. What I measured was somewhat misleading: under typical operating systems, a write call does not translate directly into a commit to disk, because writes are handled asynchronously.

00:34:06.290

When you request a write operation, the OS simply acknowledges the request and notes it to be done later, so the call returns almost immediately. Since I only need the metrics while the app is running, and not after it terminates, I never have to wait for the data to actually reach the disk, which lets me sidestep the heavy write costs.
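The difference can be observed by timing writes with and without forcing them to the device. This is a sketch under assumptions: `time_writes` is a hypothetical helper, the iteration counts are arbitrary, and the gap you see depends heavily on the OS, filesystem, and hardware.

```ruby
require "benchmark"
require "tempfile"

# Time n overwrites of the same 8 bytes. With durable: true, each write is
# followed by fsync, which asks the OS to push the data to the device.
def time_writes(n, durable:)
  elapsed = nil
  Tempfile.create("metrics") do |f|
    f.binmode
    payload = [1.0].pack("E")
    elapsed = Benchmark.realtime do
      n.times do
        f.seek(0)
        f.write(payload)
        f.flush              # hand the bytes to the OS
        f.fsync if durable   # optionally wait until they are on the device
      end
    end
  end
  elapsed
end

buffered = time_writes(2_000, durable: false)
synced   = time_writes(200, durable: true)

puts format("write only:    %.2f us per write", buffered / 2_000 * 1_000_000)
puts format("write + fsync: %.2f us per write", synced / 200 * 1_000_000)
```

A metrics store that does not need to survive a restart can skip the `fsync` step entirely, which is the situation described above.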

00:34:41.600

In this peculiar case, we confirmed that widely accepted beliefs about speed come with important nuances. The point of this talk is to convey an essential lesson about performance optimization: it's crucial to measure before optimizing, as what seems slow may not be what impacts performance at all.

00:35:15.230

While investigating the performance bottlenecks in my code, I discovered some surprising places where my assumptions had guided my thinking and led me to false conclusions.

00:35:43.140

What I learned from this project is that this goes further than I had expected. Assumptions can steer us irrationally, ruling out some approaches purely because we perceive them as slow.

00:36:15.120

In evaluating our solutions to performance challenges, we overlooked disk usage out of a collective preconception that it would be too slow. This paradigm shift came to light only through experimentation, which ultimately reassured both my coding instincts and our application processes.

00:36:49.330

So as I conclude, be cautious of the biases that filter out potential solutions. We must remain open to ideas we may initially assume won’t work. When exploring any new problem, pause to assess these preconceptions: ask why they exist, whether they hold merit, and, most importantly, carry out experiments to test them.

00:37:20.760

In the end, there could be clear and ascertainable solutions that lie quietly in the background, waiting for you to recognize their potential. Thank you for listening.