00:00:17.039

This is the last session before happy hour. I appreciate all of you for hanging around this long. Maybe you're here because you don’t know there's a bar on the first floor of this hotel; I think that is where the main track is currently taking place.

00:00:23.600

I am Austin Putman, the VP of Engineering for Omada Health. At Omada, we support people at risk of chronic diseases like diabetes, helping them make crucial behavior changes for a longer and healthier life. It’s pretty awesome! I'm going to start with some spoilers because I want you to have an amazing RailsConf. So, if this is not what you're looking for, don’t be shy about finding that bar.

00:00:41.840

We’re going to spend some quality time discussing Capybara and Cucumber, whose flakiness is legendary for very good reasons. Let me take your temperature: can I see hands—how many folks have had problems with random failures in Cucumber or Capybara? Yeah, this is reality, folks. We're also going to cover the ways that RSpec does and does not help us track down test pollution.

00:01:09.520

How many folks out there have had a random failure problem in the RSpec suite, like in your models or your controller tests? Okay, still a lot of people, right? It happens, but we don't talk about it. In between, we’re going to review some problems that can dog any test suite. This includes random data, time zones, and external dependencies—all of this leads to pain.

00:01:43.119

There was a great talk before about external dependencies. Here’s just a random question: how many people here have had a test fail due to a daylight saving time issue? Yeah. Ben Franklin, you are a menace! Let’s talk about eliminating inconsistent failures in your tests. On our team, we call that fighting randos. I'm here to talk about this because I was short-sighted, and random failures caused us a lot of pain.

00:02:09.360

I chose to try to hit deadlines instead of focusing on build quality, and our team paid a terrible price. Is anybody out there paying that price? Does anyone else feel me on this? Yeah, it sucks! So let’s do some science. Some problems seem to produce more random failures than others. I want to gather some data, so first, if you write tests on a regular basis, raise your hand. Wow, I love RailsConf! Keep your hand up if you believe you have experienced a random test failure.

00:02:50.159

Now, if you think you're likely to have one in the next four weeks, keep your hand up. Who's out there? It's still happening, right? You're in the middle of it. Okay, so this is not hypothetical for this audience; this is a widespread problem. But I don't see a lot of people talking about it. The truth is that a comprehensive integration suite, while a great tool, is a breeding ground for baffling Heisenbugs.

00:03:14.560

To understand how test failures become a chronic productivity blocker, I want to talk a little bit about testing culture. Why is this even a problem? We have an automated CI machine that runs our full test suite every time a commit is pushed, and every time the build passes, we push the new code to a staging environment for acceptance. How many people out there have a setup that's kind of like that? Okay, awesome! So a lot of people know what I’m talking about.

00:03:38.239

In the fall of 2012, we started seeing occasional unreproducible failures of the test suite in Jenkins while we were pushing to get features out the door for January 1st. We found that we could just rerun the build and the failure would go away. We got pretty good at spotting the two or three tests where this happened, so we would check the output of a failed build, and if it was one of the suspect tests, we would just run the build again—not a problem. Staging would deploy, and we would continue our march toward the launch.

00:04:02.959

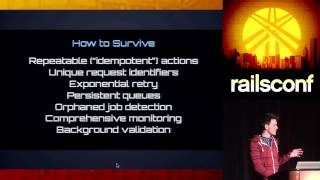

However, by the time spring rolled around, there were around seven or eight places causing problems regularly. We didn’t ignore them; we tried to fix them, but the failures were intermittent, so it was hard to say whether we had actually fixed anything. Eventually, we just added a gem called Cucumber Rerun. This gem re-runs the failed specs if there’s a problem, and if they pass the second time, the build is good. You’re fine, no big deal.
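To make the rerun idea concrete, here is a minimal Ruby sketch of what a "rerun the failures" wrapper does in principle. It is an illustration under assumptions, not the API or implementation of Cucumber Rerun: the `run_suite` and `run_with_rerun` helpers and the three stand-in tests are hypothetical.

```ruby
# Hypothetical sketch of a "rerun failures" wrapper; not the API of any real gem.
# Each test is a name mapped to a callable that returns true when it passes.

def run_suite(tests)
  # Return the names of the tests that failed on this pass.
  tests.reject { |_name, test| test.call }.keys
end

def run_with_rerun(tests)
  first_failures = run_suite(tests)
  # Rerun only the tests that failed the first time.
  second_failures = run_suite(tests.slice(*first_failures))
  {
    flaky: first_failures - second_failures, # failed once, then passed
    failed: second_failures                  # failed both times
  }
end

# A deterministic stand-in for a flaky test: it fails on its first call only.
calls = 0
tests = {
  "stable" => -> { true },
  "flaky"  => -> { (calls += 1) > 1 },
  "broken" => -> { false }
}

result = run_with_rerun(tests)
puts "flaky: #{result[:flaky].inspect}, failed: #{result[:failed].inspect}"
```

In this sketch only the `failed` list would turn a build red. Anything in `flaky` passes silently unless someone chooses to log it, which is why a rerun step can make a suite look healthier than it is.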

00:04:43.840

Then some people on our team got ambitious and thought we could make CI faster with the parallel_tests gem, which is awesome. But Cucumber Rerun and parallel_tests were not compatible. So, we had a test suite that ran three times faster but failed twice as often. As we came into the fall, we had our first bad Jenkins week. On a fateful Tuesday at 4 PM, the build just stopped passing, and there were anywhere from 30 to 70 failures. Some of them were our usual suspects, but dozens of them were previously good tests that we trusted.

00:05:21.600

None of them failed in isolation, right? After about two days of working on this, we eventually got a clean RSpec build, but Cucumber would still fail, and the failures could not be reproduced on a dev machine, or even on the same CI machine outside of a full build run. Then over the weekend, somebody pushed a commit, and we got a green build. There was nothing special about this commit; it was something like a comment change. We had tried a million things, and no single change obviously led to the passing build.

00:06:07.360

The next week, we were back to a 15% failure rate, which was pretty good. So we could push stories to staging again. We were still under deadline pressure, so we shrugged it off and moved on. Then maybe somebody wants to guess what happened next? Yeah, it happened again, right? A whole week of no tests passing. The build never passed, so we turned off parallel tests because we couldn’t even get a coherent log of which tests were causing errors.

00:06:39.480

We then started commenting out the really problematic tests. Yet there were still these seemingly innocuous specs that failed regularly but not consistently. These were tests with enough business value that we were very reluctant to just delete them. So, we reinstated Cucumber Rerun and its buddy RSpec Rerun. This mostly worked; we were making progress.

00:07:06.319

However, the build issues continued to show up in the negative column in our retrospectives, because there were several problems with this situation. The first was reduced trust. When build failures happen four or five times a day, they stop being a red flag; they are just how things go, and everyone on the team knows that the most likely explanation is a random failure. The default response to a build failure became 'run it again.'