00:00:25.519

How's everyone feeling? Pretty good? Still energetic in the afternoon? Not too sleepy from lunch, I'm hoping.

00:00:31.160

So, my name is Jerry. I work as a senior engineer at Intridea. We do mostly Rails consulting.

00:00:37.079

I've been working there for a couple of years now and have been doing Rails and Ruby for the last five years. It's been a few years now, but definitely not as long as some of the veterans out here.

00:00:50.680

It's really humbling to talk to some of these really awesome, smart people in the hallways and get their perspective and experience on everything.

00:01:01.879

Anyways, my talk is about Evented Ruby and how it compares with Node.js. I wanted to give some background about these topics and also share some advice on how you can bring some of the performance benefits from Node into your existing Rails app.

00:01:21.159

We know everyone here loves Ruby, and I want Ruby to be the core of this talk.

00:01:32.600

As far as performance optimizations go, I'm a big fan of being as lazy as possible. If my users are happy, then obviously I'm going to be happy with response times.

00:01:46.119

Too many times I see people go after premature optimizations or micro-optimizations that give some benefit in production, but not enough to really justify the work or the level of effort.

00:02:06.560

Given the choice, I'd much rather write new features or learn new technologies than chase after these optimizations. That said, the web is becoming more complex and more real-time, so we do need to keep our apps running fast to avoid driving away potential users.

00:02:21.200

When I do have to look for optimizations, I want to look for things that are easy to implement, easy to maintain, and give me the most bang for my buck. Fortunately, a lot of the changes I'm discussing today are reasonably straightforward and minimize code changes.

00:02:47.760

In some instances, you don't have to change any of your server infrastructure at all. As an overview of what I'll cover: first, some background on what evented programming is and why it matters for server-side coding, particularly web backends.

00:03:09.440

Then, I'll talk about Evented Ruby and the pros and cons compared to Evented JavaScript, and how these two languages differ in their approaches to concurrency.

00:03:38.240

Finally, I will go through a longer example of how to add Evented Ruby to a Rails app because while it might be straightforward to write an Evented Ruby script or utility, it's a little more involved to integrate the evented paradigm into a procedural Rails ecosystem.

00:04:01.000

So, what is evented programming? All it is is registering function callbacks for events that we care about. We do this every day in client-side programming. For example, a small snippet could look like this: let me know when someone clicks on the body element. When that event triggers, we want to turn the background color red.

00:04:21.239

That's very straightforward with no surprises. This little snippet illustrates the reactor pattern, which states that you have a system called a reactor that listens for events and then delivers those events to the subscribed callbacks.
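
The reactor pattern described here can be sketched in a few lines of plain Ruby. This is a toy illustration rather than how a browser or any real reactor is implemented; `ToyReactor`, `on`, `emit`, and the `page` hash are hypothetical names made up for the example.

```ruby
# A toy reactor: it keeps a table of callbacks per event name and
# delivers each incoming event to the callbacks subscribed to it.
class ToyReactor
  def initialize
    @callbacks = Hash.new { |hash, name| hash[name] = [] }
    @pending = []
  end

  # Registration phase: remember the block, do not run it yet.
  def on(event_name, &callback)
    @callbacks[event_name] << callback
  end

  # Something outside the application (a user, the network) produces an event.
  def emit(event_name, payload = nil)
    @pending << [event_name, payload]
  end

  # The loop: take events in arrival order and run their callbacks.
  def run
    until @pending.empty?
      event_name, payload = @pending.shift
      @callbacks[event_name].each { |callback| callback.call(payload) }
    end
  end
end

page = { background: "white" } # hypothetical stand-in for the DOM
reactor = ToyReactor.new
reactor.on(:click) { |_target| page[:background] = "red" }

reactor.emit(:click, "body")
reactor.run
puts page[:background]
```

The application code only registers interest and supplies a block; deciding when that block runs is entirely the reactor's job.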

00:04:33.440

In our example, the reactor is the browser, and the types of events it knows about are DOM events. Certain problems are naturally suited to the reactor pattern. For instance, when talking about user input, such as keyboard events, mouse events, or touch events on tablets, it makes sense to register these callbacks since there's no way to anticipate when these events will occur.

00:05:11.280

It's also beneficial that we have these reusable reactors—pre-built systems—so that every time we write something in JavaScript, we don't need to write mouse detection code from scratch. Not all events have to be user-initiated or physical, though; you can define an event to be anything you want.

00:05:39.840

For example, an event could be when the network comes up or goes down, or when data is ready from a SQL query. According to the Node.js website, it uses an event-driven, non-blocking I/O model. We are now hearing some of these terms, but what does it mean to have non-blocking I/O, and why is that important?

00:06:29.360

I came across this great analogy from Christian Parisi: if RAM were an F-18 Hornet with a maximum speed of 1,190 mph, disk access would be a banana slug with a top speed of 0.007 mph. Most of us understand this intuitively.

00:06:49.760

More specifically, blocking I/O occurs when slower devices can't deliver data to the CPU quickly enough, so the CPU sits waiting until those devices have something for it to process. We know that CPUs are faster than memory, memory is faster than disk, and disk is faster than network access. The longer we have to wait, the more we idle the CPU.

00:07:29.600

The nice thing is that our operating system works with hardware caches to hide this from us. In Ruby, when we call File.read(file_path), it looks like a single operation, even though behind the scenes the CPU isn't doing much work: it just starts the operation and then waits a long time for the disk to return any data.

00:07:54.800

Another advantage provided by the operating system is that when it notices the CPU isn't doing anything, it can switch to other processes. For instance, the fact that we're running a SQL query in Rails doesn't mean that our music is going to stop playing or that our browser is going to stop working.

00:08:29.760

What Node.js means when it says it has event-driven, non-blocking I/O is that it builds on the concurrency provided by the operating system and takes it a step further by managing I/O at the application layer.

00:08:52.160

When you start a Node.js process, you get a reactor, and with that reactor you can register a callback whenever you're about to perform a slow, blocking I/O operation. Because registering a callback takes almost no time, Node can continue doing other work within its own process without having to switch to other processes.
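
To make "managing I/O at the application layer" a little more concrete, here is a minimal sketch in plain Ruby using `IO.select` from the standard library. It is not how Node.js or EventMachine are implemented internally; the two pipes and the background threads are hypothetical stand-ins for slow devices.

```ruby
# Two pipes stand in for two slow I/O sources (hypothetical).
slow_reader, slow_writer = IO.pipe
fast_reader, fast_writer = IO.pipe

# Registration: which callback to run when a source has data.
results = []
callbacks = {
  slow_reader => ->(data) { results << "slow: #{data}" },
  fast_reader => ->(data) { results << "fast: #{data}" }
}

# Simulate the outside world: the "fast" source answers first.
producers = [
  Thread.new { sleep 0.2; slow_writer.write("disk data"); slow_writer.close },
  Thread.new { sleep 0.05; fast_writer.write("cache data"); fast_writer.close }
]

# The reactor loop: IO.select sleeps until at least one source is ready,
# so the process is never stuck waiting on one particular source.
until callbacks.empty?
  ready, = IO.select(callbacks.keys)
  ready.each do |io|
    data = io.read
    callbacks.delete(io).call(data)
    io.close
  end
end

producers.each(&:join)
puts results
```

The callbacks fire in the order the data arrives, not in the order they were registered, which is the essential difference from sequential blocking reads.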

00:09:48.680

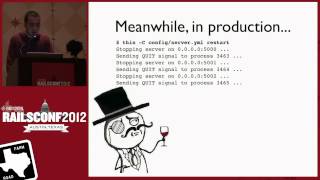

Now that we've discussed evented programming in a vague general sense, you might be wondering: if the operating system already handles blocking I/O slowness for us, why should we care about evented I/O for server-side programming? The reason is that even though the OS can switch between processes, we see a lot of waste in each of the individual Rails processes that we start.

00:10:56.679

Inside all of our apps, we typically do a lot of database access and file system access, which touches the disk. If we access external APIs, such as when storing something in S3, that requires network communication. Moreover, if we use an external program like ImageMagick, the act of shelling out to another command and reading the output from it is also blocking I/O.

00:11:52.960

To make this more concrete, consider writing a controller action that creates a new tweet and saves it to our database. In just a few lines, we've shown a significant amount of blocking I/O.

00:12:02.400

When we shorten links, we are making a network call, and when we save the tweet to the database, that touches the disk. All of this happens transparently for us, which is fantastic, but from the CPU's perspective we do a bit of processing and then wait a long time on I/O for each request.
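
The slide's code is not part of this transcript. As a rough reconstruction of the idea only, a blocking version might look like the sketch below. Every name here (`LinkShortener`, `Tweet`, `create_tweet`, the `short.example` URL) is hypothetical, and the `sleep` calls merely stand in for network and disk latency.

```ruby
# Hypothetical stand-ins; none of these names come from the talk
# or from a real library.
class LinkShortener
  # Pretend network call to a URL shortener (blocking).
  def self.shorten(text)
    sleep 0.01 # stands in for network latency
    text.gsub(%r{https?://\S+}, "http://short.example/x1")
  end
end

class Tweet
  attr_reader :body, :saved

  def initialize(body)
    @body = body
    @saved = false
  end

  def shorten_links!
    @body = LinkShortener.shorten(@body) # blocks on the "network"
  end

  def save
    sleep 0.01 # stands in for a database write hitting the disk
    @saved = true
  end
end

# The "controller action": each line waits for the previous one to finish,
# and the process can do nothing else in the meantime.
def create_tweet(params)
  tweet = Tweet.new(params[:body])
  tweet.shorten_links!
  tweet.save
  tweet
end

tweet = create_tweet(body: "Slides at http://example.com/evented-ruby")
puts tweet.body
```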

00:12:37.800

The first request must finish before the second request can be processed. To manage this, we often start multiple Rails processes to handle concurrent users. If you're using Passenger or Unicorn with a set number of workers, each Rails process handles one request at a time.

00:13:07.400

This approach works fine, but the downside is that we consume a lot of memory running many Rails processes at once. Furthermore, for the amount of memory consumed, each of these processes can handle only one request at a time.

00:14:07.600

If we write the same code using Node.js, it might look something like this: instead of having each line run sequentially, whenever we're about to perform blocking I/O, we can register a callback. So, we don't wait for Bitly to return our response; we say, "When you're done shortening the URL, call this callback."
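
The talk's version is JavaScript on a slide that is not reproduced here. To keep the examples in one language, the same callback shape is sketched below in plain Ruby. `TinyReactor`, `shorten_links`, and `save_tweet` are hypothetical helpers that return immediately and invoke their block later; no real Bitly or database client is involved.

```ruby
# A minimal queue-based reactor so the sketch can run on its own.
class TinyReactor
  def initialize
    @queue = []
  end

  # Pretend to start an I/O operation; its callback runs on a later tick.
  def async(result, &callback)
    @queue << -> { callback.call(result) }
  end

  def run
    @queue.shift.call until @queue.empty?
  end
end

# Hypothetical asynchronous helpers: both return right away and call the
# block once the "I/O" has completed.
def shorten_links(reactor, body, &done)
  short = body.sub("http://example.com/a-very-long-path", "http://short.example/x1")
  reactor.async(short, &done)
end

def save_tweet(reactor, body, &done)
  reactor.async({ id: 1, body: body }, &done)
end

# The "controller action" in callback style: each step is nested inside
# the callback of the step before it.
def create_tweet(reactor, log, body)
  shorten_links(reactor, body) do |short_body|
    save_tweet(reactor, short_body) do |record|
      log << "saved tweet #{record[:id]}: #{record[:body]}"
    end
  end
  log << "create_tweet returned"
end

reactor = TinyReactor.new
log = []
create_tweet(reactor, log, "Read http://example.com/a-very-long-path")
reactor.run
puts log
```

Note that `create_tweet` returns before anything has been saved; the nested blocks run later, when the reactor gets to them.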

00:14:36.800

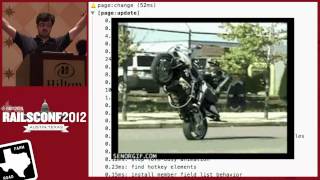

The main difference is that while handling the first request, we do a little processing, and because all we're doing is registering callbacks, this action completes almost immediately, allowing us to return to the reactor. Meanwhile, we also start the blocking I/O operation, and while that operation is happening, the reactor can manage new incoming requests.

00:15:32.040

Even though it appears that we handle multiple requests with one Node process, it's essential to note that at any given time, only one operation runs. The primary benefit is that during the blocking I/O sections, we can initiate new requests.

00:16:29.120

If we depict this same scenario using Node, each process can handle multiple requests, allowing us to reduce the number of Node processes required to manage the same workload.

00:16:51.679

Additionally, if one Node process is busy, we can start another process, benefiting from operating system process concurrency. The overall outcome is that we require fewer processes, resulting in less memory usage and lowering costs.

00:17:25.920

An important distinction to note is the difference between latency and concurrency. Even though the Ruby version can only handle one request at a time, if there is just one request, both versions will take the same time.

00:17:50.960

Using evented I/O does not magically make disk operations faster. If your requests are inherently slow, I recommend optimizing response latency first because that’s what your users will notice.
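
A back-of-the-envelope model can make the latency versus concurrency distinction concrete. The numbers are invented for illustration (10 ms of CPU work and 90 ms of I/O wait per request), and the evented formula is an idealization in which all requests arrive at once, CPU work is serialized, and I/O waits overlap perfectly.

```ruby
# One blocking process: requests run back to back, each paying CPU + I/O.
def blocking_total_ms(requests, cpu_ms:, io_ms:)
  requests * (cpu_ms + io_ms)
end

# One evented process (idealized): CPU work is still one-at-a-time,
# but the I/O waits overlap, so only the last request's wait is added.
def evented_total_ms(requests, cpu_ms:, io_ms:)
  (requests * cpu_ms) + io_ms
end

costs = { cpu_ms: 10, io_ms: 90 } # hypothetical per-request costs

[1, 10].each do |requests|
  blocking = blocking_total_ms(requests, **costs)
  evented = evented_total_ms(requests, **costs)
  puts "#{requests} request(s): blocking #{blocking} ms, evented #{evented} ms"
end
# prints:
# 1 request(s): blocking 100 ms, evented 100 ms
# 10 request(s): blocking 1000 ms, evented 190 ms
```

With a single request both models take the same 100 ms, which is the latency point: evented I/O only helps once requests overlap.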

00:18:30.960

While the Node version can handle more concurrent requests, there is a trade-off for this improvement. If we look at our application code, it now has to be aware of blocking I/O.

00:19:10.600

Previously, we could just shorten links, and the next line would execute as if the work were already done. In the callback version, we expose the fact that both shortening links and saving involve blocking operations, which isn't something we should have to think about in our domain logic.

00:19:48.320

As Ruby enthusiasts, we appreciate the benefits of writing code that is easy to read and understand. When we start nesting callbacks, we end up with what could be described as callback spaghetti.

00:20:49.560

So, we see that Node gives us better concurrency, but can we achieve similar benefits with Evented Ruby? And if we do, what are the trade-offs? The good news is that Ruby supports evented programming.

00:21:06.400

By default, we tend to use Ruby procedurally, but its multi-paradigm nature allows us to work in various styles. You could use a library to run your code inside a reactor, or even leverage threads for parallel computing.

00:21:51.520

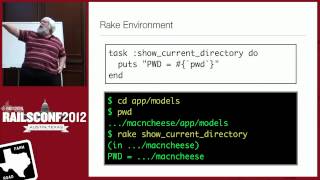

The challenge is that within the same codebase, you can mix and match paradigms. The first step towards evented I/O in Ruby is to add a reactor. One of the most popular libraries for that is EventMachine.

00:22:11.359

Because there's no assumption that you're going to run evented code, you have to explicitly start the reactor yourself. In Node.js, typing 'node' runs your script in a reactor, while in Ruby you need to call 'EventMachine.run' to start the reactor loop.

00:22:48.200

Fortunately, many application servers we use already have a reactor built in. For example, using Thin means you already have an EventMachine reactor running. Rainbows!, which builds on Unicorn, offers a similar model that can run an EventMachine reactor.

00:23:23.679

If you're using Passenger, it’s a bit more complicated, but you can still run your code in a reactor with some modifications.

00:23:44.360

Now that we have a reactor, we still need to handle events and callbacks when these events occur. This code example demonstrates how to use a reactor-aware HTTP library to fetch a web page.

00:24:19.760

The EventMachine library includes an HTTP client. You can create a request object with a given URL and specify a callback when the request is completed. This is the registration phase where we register for that event.

00:24:56.720

During execution, even if railsconf2012.com takes forever to load, we've registered the callback, so the reactor is free to handle other tasks in the meantime.

00:25:31.280

However, writing code this way introduces the same issues we discussed earlier. We manipulate the scheduling of I/O operations on our own, and it might not feel like idiomatic Ruby.

00:25:54.000

Instead of saying, 'Fetch me a web page,' the code turns into request objects and callbacks. With a library like Faraday, a single straightforward call does what we need.

00:26:22.479

Moreover, with a small adjustment, we can configure Faraday to operate within a reactor. We still use Faraday as expected, without altering its interface. Everything continues to work, but all I/O now runs in an evented manner.

00:26:47.679

The bottom line is that we can keep our application code clean while hiding callbacks within libraries. This is achieved by using Ruby's Fiber objects, which provide cooperative concurrency.

00:27:38.160

Fibers allow us to create blocks that can pause and resume, like threads. When we initiate an HTTP request, we start a new fiber, which lets the reactor continue running while that fiber waits on I/O.

00:28:18.000

Unfortunately, managing the scheduling of fibers adds complexity. The virtual machine never preempts a fiber that is blocking the reactor; you must tell it explicitly when to pause and when to resume, which is extra control flow you have to manage yourself.
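
The explicit pause and resume described here is visible in a small example that uses only Ruby's built-in `Fiber` class. The log messages and the `:waiting_for_io` symbol are made up for illustration.

```ruby
log = []

worker = Fiber.new do
  log << "worker: start request"
  # Hand control back to whoever called resume. Nothing preempts this
  # fiber; it pauses only because it asks to.
  answer = Fiber.yield(:waiting_for_io)
  log << "worker: got #{answer}"
  :done
end

state = worker.resume                    # runs until Fiber.yield
log << "main: worker is #{state}"
log << "main: free to do other work"
result = worker.resume("response body")  # continues right after Fiber.yield
log << "main: worker finished with #{result}"

puts log
```

The value passed to `resume` becomes the return value of `Fiber.yield` inside the fiber, which is the hook a library can use to deliver an I/O result.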

00:29:06.880

Each domain model has its own logic. In the tweet domain, for example, the model should hide the complexities of shortening links and talking to the database, allowing our application logic to remain clean and focused on business needs.

00:29:49.040

It's reasonable for a database adapter to manage its own I/O operations since that pertains to its specific domain. As an app developer, you should be able to utilize that database adapter seamlessly, unaware of the underlying operations.
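
One way a library can keep its callbacks to itself is sketched below: a wrapper pauses the current fiber, and the callback resumes it with the result. This is, as far as I understand it, the general trick behind fiber-aware adapters, but the code is only a toy. `TinyReactor`, `CallbackHttp`, and `SyncLookingHttp` are hypothetical classes, and no real network access happens.

```ruby
require "fiber" # needed for Fiber.current on older Rubies

class TinyReactor
  def initialize
    @queue = []
  end

  def next_tick(&block)
    @queue << block
  end

  def run
    @queue.shift.call until @queue.empty?
  end
end

# Hypothetical callback-style client: returns at once, calls back later.
class CallbackHttp
  def initialize(reactor)
    @reactor = reactor
  end

  def get(url, &callback)
    @reactor.next_tick { callback.call("<html>#{url}</html>") }
  end
end

# The wrapper a library author might write: same I/O, blocking-style API.
class SyncLookingHttp
  def initialize(client)
    @client = client
  end

  def get(url)
    fiber = Fiber.current
    @client.get(url) { |body| fiber.resume(body) } # wake us up when done
    Fiber.yield # pause this fiber; the reactor keeps running
  end
end

reactor = TinyReactor.new
http = SyncLookingHttp.new(CallbackHttp.new(reactor))
log = []

%w[http://example.com/a http://example.com/b].each do |url|
  reactor.next_tick do
    Fiber.new do
      log << "start #{url}"
      body = http.get(url) # reads like a blocking call
      log << "done #{body}"
    end.resume
  end
end

reactor.run
puts log
```

Both requests start before either finishes, yet the code inside each fiber contains no callbacks.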

00:30:18.960

However, there are cases where we need to focus on the event itself. For instance, when registering for publish events in Redis, we want visibility into the event details rather than hiding them completely.

00:30:50.800

While we have our reactor integrated with the app server, we also need the requests running in individual fibers. Luckily, web requests are independent of one another, making it sensible to wrap each in its own fiber.

00:31:36.239

As a result, the reactor can switch between concurrent requests in the same process. Thanks to Rails being built on top of Rack, we can use the rack-fiber_pool gem to wrap each incoming request in a separate fiber.
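
The idea of one fiber per request can be shown with a Rack-style middleware written in plain Ruby. This is a deliberately simplified sketch and not the gem's implementation: it has no pool, and it only returns a response when the app does not pause its fiber mid-request (a real implementation has to hand the response back to the server asynchronously in that case). `FiberPerRequest` is a hypothetical name.

```ruby
require "fiber" # needed for Fiber.current on older Rubies

# Minimal Rack-style middleware: anything with call(env) that returns
# [status, headers, body] fits the Rack interface.
class FiberPerRequest
  def initialize(app)
    @app = app
  end

  def call(env)
    response = nil
    # Run the rest of the stack inside a fresh fiber so that fiber-aware
    # libraries further down have something to pause and resume.
    Fiber.new { response = @app.call(env) }.resume
    response
  end
end

root_fiber = Fiber.current
app = lambda do |env|
  path = env["PATH_INFO"]
  in_own_fiber = !Fiber.current.equal?(root_fiber)
  [200, { "content-type" => "text/plain" }, ["#{path} own fiber: #{in_own_fiber}"]]
end

status, _headers, body = FiberPerRequest.new(app).call({ "PATH_INFO" => "/tweets" })
puts status
puts body
```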

00:32:11.520

The same approach works for other Rack-based Ruby web frameworks like Sinatra or Grape. While we've made some adjustments, the infrastructure changes are minimal; you can likely keep using your existing app server, which might already be reactor-aware.

00:33:06.360

The only code adjustment required is ensuring every request runs in its own fiber, which is a straightforward change. If you benchmark the updated application at this point, however, you will see no improvement over the original.

00:34:49.679

None of your current code is aware it operates within a reactor. Active Record, for example, doesn't yield to the reactor when making calls, meaning that while you've incorporated fibers, blocking remains your primary issue.

00:35:58.480

While these modifications might not yield immediate gains, they still provide a foundation for future improvements. As we work through making our code reactor-aware, we will see performance benefits.

00:36:27.159

To get started, focus on libraries and components that commonly use blocking I/O. From your data stores to API calls and external commands, these are the places you will find opportunities for improvement.

00:37:04.239

Some database adapters might require only a simple configuration change to support running within a reactor. Often, you can leverage gems like em-synchrony to patch existing adapters, making them reactor-aware.

00:38:07.679

Faraday is a recommended HTTP client to use as it can toggle between different adapters, normalizing the interface to provide consistency. The best part of Evented Ruby is that you maintain the readability of your interface while gaining concurrency.

00:39:05.840

For system calls, EventMachine offers functions similar to those found in the Kernel module, such as EventMachine.popen, which is non-blocking. If you're in a situation where synchronous code is unavoidable, you can use EM.defer to run the blocking operation in a separate thread.
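
The deferral idea (push unavoidable blocking work onto a thread and deliver its result back to the reactor as a callback) can be illustrated without EventMachine. The toy below uses only Ruby's `Thread` and `Queue`; it is not EventMachine's implementation, does no error handling, and `TinyReactor#defer` is a hypothetical method whose operation-then-callback shape is loosely modeled on the description above.

```ruby
class TinyReactor
  def initialize
    @completed = Queue.new # thread-safe handoff from workers to the loop
    @outstanding = 0
  end

  # Run `operation` on a background thread, then run `callback` with its
  # result back on the thread that is running the reactor loop.
  def defer(operation, callback)
    @outstanding += 1
    Thread.new { @completed << [callback, operation.call] }
  end

  def run
    while @outstanding > 0
      callback, result = @completed.pop # sleeps until a worker finishes
      @outstanding -= 1
      callback.call(result)
    end
  end
end

reactor = TinyReactor.new
results = []

# Stand-in for synchronous code that cannot be made reactor-aware.
blocking_lookup = -> { sleep 0.05; "row 42" }

reactor.defer(blocking_lookup, ->(row) { results << row })
reactor.run
puts results
```

The trade-off is that threads come back into the picture, so this is best kept as a fallback for code that has no evented alternative.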

00:39:26.359

By making your libraries reactor-aware, you will likely notice higher throughput, similar to the Node.js model, where the reactor handles new requests while other requests are waiting on I/O.

00:39:41.470

In conclusion, implementing evented programming is challenging, yet incredibly valuable. It requires effort to ensure your application code remains clean, but the payoff is significant and can lead to a more efficient app.

00:40:00.000

Before diving headfirst into evented programming, address response latency first. Even minor adjustments can allow your existing app servers to handle additional requests swiftly.

00:40:12.000

The key takeaway is that evented I/O interfaces shouldn't cloud your domain logic; such callbacks should remain in I/O-related libraries.