00:00:20.660

Okay, so I'm going to talk about the just-in-time compiler for Ruby. Since this is my first visit to RailsConf, let me introduce myself.

00:00:30.300

My name is Takashi Kokubun, and I work at Arm Treasure Data. I've been a Ruby committer for about two to three years.

00:00:37.140

At work, my main focus is Ruby on Rails application development. On the Ruby side, I started contributing as a maintainer and have since been actively working on the JIT compiler.

00:00:46.379

The JIT work is a side project for me, because my primary responsibility is the business of Treasure Data.

00:00:54.680

After work, I spend my time on the JIT compiler, so this talk is all about that side work.

00:01:07.050

JIT stands for just-in-time, and it is currently an experimental feature of Ruby. This talk is all about MRI, the reference implementation of Ruby.

00:01:19.020

By passing the `--jit` option, we can optionally enable this experimental feature to make Ruby itself faster. Let me explain what is going on behind it.

00:01:31.470

The process starts with Ruby generating a header to be consumed by the JIT compiler. The JIT then works as a C code generator, translating Ruby's VM bytecode into C.

00:01:52.349

However, merely translating from one language to another does not improve performance. When we generate the C code, we need to apply a variety of optimizations.

00:02:06.320

These include inlining instructions, caching variable accesses, and optimizing method calls. It is these optimizations, not the translation to C itself, that improve performance.
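One common form of the call caching mentioned here is an inline cache. The following is a minimal Python model of a monomorphic inline cache, for illustration only: `ToyClass`, `CallSite`, and the per-class `serial` counter are hypothetical names, not MJIT's actual data structures. Each call site remembers the class it last saw and the method it resolved, and skips the method lookup as long as that class has not been modified.

```python
class ToyClass:
    """Hypothetical stand-in for a Ruby class: a method table plus a serial."""

    def __init__(self, name):
        self.name = name
        self.methods = {}
        self.serial = 0  # bumped whenever a method is (re)defined

    def define(self, method_name, fn):
        self.methods[method_name] = fn
        self.serial += 1


class CallSite:
    """Monomorphic inline cache for a single call site (hypothetical model)."""

    def __init__(self, method_name):
        self.method_name = method_name
        self.cached_class = None
        self.cached_serial = -1
        self.cached_fn = None
        self.misses = 0

    def call(self, klass, *args):
        # Fast path: same class and no redefinition since the lookup was cached.
        if klass is self.cached_class and klass.serial == self.cached_serial:
            return self.cached_fn(*args)
        # Slow path: full method lookup, then refill the cache.
        self.misses += 1
        fn = klass.methods[self.method_name]
        self.cached_class = klass
        self.cached_serial = klass.serial
        self.cached_fn = fn
        return fn(*args)
```

A JIT can take this one step further than an interpreter: because the cached method is already known when the code is compiled, the generated code can check the class and serial and then call the method directly.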

00:02:25.440

To implement Ruby's semantics, the generated code still has to call functions inside the Ruby interpreter, so translating to C alone won't improve performance.
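To illustrate why translation alone doesn't help, here is a toy translator sketched in Python. The instruction names `putobject`, `opt_plus`, and `leave` are borrowed from YARV, but the stack machine, the `translate` function, and the helper `toy_vm_plus()` are hypothetical; this is not how MJIT generates code. In the naive mode every addition still calls back into an interpreter helper; in the inline mode the arithmetic is emitted directly.

```python
def translate(iseq, func_name="jit_method", inline=False):
    """Translate a toy stack-machine instruction sequence into C source.

    iseq is a list of (opcode, operand) tuples. With inline=False, each
    addition calls a hypothetical interpreter helper, toy_vm_plus(), as a
    naive translation would. With inline=True, the addition is emitted
    directly; this toy assumes operands are plain C longs, whereas a real
    JIT must guard the operand types and fall back to the helper.
    """
    # Fixed-size operand stack: enough for this toy, not a general design.
    out = [f"long {func_name}(void) {{", "    long stack[16];", "    int sp = 0;"]
    for op, arg in iseq:
        if op == "putobject":
            out.append(f"    stack[sp++] = {int(arg)};")
        elif op == "opt_plus":
            out.append("    sp--;")
            if inline:
                out.append("    stack[sp - 1] = stack[sp - 1] + stack[sp];")
            else:
                out.append("    stack[sp - 1] = toy_vm_plus(stack[sp - 1], stack[sp]);")
        elif op == "leave":
            out.append("    return stack[--sp];")
        else:
            raise ValueError(f"unsupported instruction: {op}")
    out.append("}")
    return "\n".join(out)
```

For example, `translate([("putobject", 1), ("putobject", 2), ("opt_plus", None), ("leave", None)])` produces C that still calls `toy_vm_plus`, while passing `inline=True` produces a plain addition.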

00:02:37.240

I will talk about the details of how we can improve this process.

00:02:44.980

After the C code is generated, a C compiler such as GCC, Clang, or Visual Studio compiles it into a shared library.

00:03:18.370

The Ruby interpreter then loads that shared library dynamically, using functions like dlopen. Because each compiled method produces its own files, compiling many methods leaves a lot of files in a temporary directory.
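To make the compile-and-load step concrete, here is a minimal Python sketch of the same flow outside of Ruby. It writes C source into a temporary directory, invokes a C compiler (assumed to be available as `cc`) to build a shared library, and loads it with `ctypes.CDLL`, which uses `dlopen` on POSIX systems. The function name `compile_and_load` is hypothetical, and this is not MJIT's code.

```python
import ctypes
import os
import subprocess
import tempfile


def compile_and_load(c_source, func_name, cc="cc"):
    """Compile C source into a shared library and return one loaded function.

    Assumes a POSIX system with a C compiler on PATH (cc, gcc, or clang).
    The loaded function is assumed to take no arguments and return a C long.
    """
    workdir = tempfile.mkdtemp(prefix="toyjit-")  # one temp dir per call here
    c_path = os.path.join(workdir, func_name + ".c")
    so_path = os.path.join(workdir, func_name + ".so")
    with open(c_path, "w") as f:
        f.write(c_source)
    # Build a position-independent shared library from the generated C.
    subprocess.run([cc, "-O2", "-fPIC", "-shared", c_path, "-o", so_path], check=True)
    lib = ctypes.CDLL(so_path)  # dlopen() under the hood on POSIX
    fn = getattr(lib, func_name)  # symbol lookup, like dlsym()
    fn.restype = ctypes.c_long
    fn.argtypes = []
    return fn
```

For example, `compile_and_load("long answer(void) { return 6 * 7; }", "answer")()` returns 42 on a system with a working C compiler.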

00:03:39.430

These files are kept for later linking: we link many .o files into a single .so file, because having many small, fragmented functions scattered across memory increases overhead.

00:03:54.160

We do this linking to reduce overhead in the instruction cache.
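The compaction idea can be sketched as building the compiler command lines in Python: each method is compiled to its own object file, and a final link step combines all of them into one shared library. The function name `compaction_commands`, the directory layout, and the output name `compacted.so` are hypothetical; the sketch only shows the shape of the commands, not MJIT's exact flags.

```python
import os


def compaction_commands(method_names, workdir, cc="cc"):
    """Return (compile_cmds, link_cmd) argv lists for a toy compaction step.

    Each method is compiled to its own object file; the link step then
    combines all of them into a single shared library, so the machine code
    lives in one mapping instead of one small .so per method.
    """
    compile_cmds = []
    objects = []
    for name in method_names:
        c_file = os.path.join(workdir, name + ".c")
        o_file = os.path.join(workdir, name + ".o")
        compile_cmds.append([cc, "-O2", "-fPIC", "-c", c_file, "-o", o_file])
        objects.append(o_file)
    link_cmd = [cc, "-shared", *objects, "-o", os.path.join(workdir, "compacted.so")]
    return compile_cmds, link_cmd
```

Each argv list can be passed to `subprocess.run(cmd, check=True)`: running the compile commands first and the link command last yields a single `compacted.so`.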

00:04:09.310

So far with Ruby 2.6, I haven't received any segmentation fault reports, because we run continuous integration for the JIT compiler 24 hours a day.

00:04:35.979

That CI occasionally hits random segmentation faults, which I have been fixing myself. Now the JIT is fairly stable.

00:04:55.180

Nonetheless, stability means nothing if the JIT doesn't improve performance. Let me show the current state of the JIT compiler's performance.

00:05:09.100

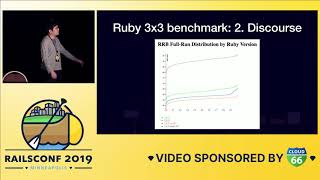

Ruby's developers rely on benchmarks to improve Ruby's performance. A popular one is Optcarrot, an NES emulator used as the benchmark for Ruby 3x3, the goal that Ruby 3 should be three times faster than Ruby 2.0.

00:05:38.530

Currently, comparing Ruby 2.6 with and without the JIT, the JIT makes it 1.6 times faster. Between Ruby 2.0 and Ruby 2.6, performance improved 2.5 times.

00:06:05.140

So reaching three times faster looks quite feasible on this benchmark. Memory consumption, however, may be a trade-off for speed.
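As a quick check of the 3x3 arithmetic: if Ruby 2.6 is already 2.5 times faster than Ruby 2.0 on this benchmark, the remaining speedup needed to reach the 3x goal is

$$
\frac{3.0}{2.5} = 1.2,
$$

that is, only about another 20% on top of Ruby 2.6.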

00:06:23.950

In this case, memory consumption stays low because the compilation happens in a separate process, the C compiler such as GCC.

00:06:49.310

The generated code does not consume significant memory either, which makes the JIT look promising for Rails as well.

00:07:20.150

However, we don't run emulators in production; this benchmark is a toy project rather than an actual web application.

00:07:56.290

For real-world programs, we need measurements taken in a Rails application. Another well-known Ruby benchmark is based on the Discourse application.

00:08:24.130

Discourse is a forum application written in Rails, and it has been adapted into a benchmark for Rails performance.

00:08:53.320

Many reports measure Discourse's performance with the JIT compiler. A graph provided by the benchmark's developer shows lower runtime for the optimized runs.

00:09:27.030

With Ruby 2.6, the memory consumption may look bad, but I don't want to focus only on that right now.

00:09:49.460

Measuring performance over only 10,000 requests does not necessarily reflect actual performance. A real-world application running for at least 10 minutes gives a broader view.

00:10:10.550

Because JIT compilation uses the same CPU, if you run Ruby with Puma and Rails at high CPU utilization, performance degrades while the JIT is compiling.

00:10:33.060

Thus, I am focusing on improving performance after JIT compilation has finished. Once all compilations are completed, we want our efforts to result in a stable improvement.
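Measuring over a longer run, rather than only the first requests, can be sketched as a benchmark loop that discards a warm-up phase so the JIT has time to compile hot methods before timing starts. This is a generic Python sketch: `measure_throughput` and the `handle_request` callable are hypothetical, and picking a warm-up long enough for compilation to finish is an assumption the caller has to make.

```python
import time


def measure_throughput(handle_request, warmup_requests, measured_requests):
    """Return requests per second, excluding a warm-up phase.

    The warm-up calls give a JIT time to compile hot methods; only the
    measured calls are timed.
    """
    if measured_requests <= 0:
        raise ValueError("measured_requests must be positive")
    for _ in range(warmup_requests):
        handle_request()  # not timed: the JIT may still be compiling
    start = time.perf_counter()
    for _ in range(measured_requests):
        handle_request()
    elapsed = time.perf_counter() - start
    return measured_requests / elapsed
```

The result is a throughput figure, so, as with the graph, a higher number means better performance.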

00:10:48.320

This graph shows throughput; higher throughput means better performance.

00:11:02.060

Currently, Ruby 2.6 with the JIT performs worse here than without it. I am actively working to improve this situation.

00:11:10.750

My goal is to improve Ruby's performance significantly. For now, the Ruby 2.7 JIT shows a slight improvement, but not enough to beat the non-JIT performance.

00:11:30.950

The key challenge is the overhead of calling and loading a large amount of dynamically generated native code. I am actively investigating the causes of this performance degradation.

00:11:48.010

This presentation explains why the JIT is not faster yet and what we are doing to improve it. To understand this, we must profile real-world applications and their specific problems.

00:12:23.660

Profiling can be complex, because a real application exercises many different features and code paths, and the results often don't point to the core performance problem; measuring the Discourse application shed little light on it.

00:12:39.970

Currently, I am working on improving several aspects of a real-world Rails scaffold application. This is crucial, because our benchmarks should reflect realistic usage.

00:13:08.890

Measuring Rails performance with the Vitamin benchmarking tool, the results show that Ruby 2.6 with the JIT was slow, and Ruby 2.7 does not yet bring a significant speedup.

00:13:43.050

However, memory consumption remains steady, with no drastic increase.

00:14:00.260

Further optimization work is expected. Our goal is to build compiler-distributed environments so that Ruby's JIT performance improves over time.

00:14:26.420

To conclude, I'm currently focusing on improving Rails performance by continuously measuring it with accurate benchmarks.

00:14:40.690

Continuously assessing the performance of the compiled code will be crucial going forward.

00:15:04.370

I have one minute for questions. Feel free to ask.

00:15:09.239

If your question is about where the time goes besides allocation, I can share my current analysis.

00:15:22.369

I have observed that close to 15% of the time is spent in the VM itself, while about 9% is spent on object allocation and memory management.

00:15:43.680

Another 6 to 7% goes to regular expression handling, which I haven't optimized yet.
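A breakdown like this can be produced by bucketing profiler samples by function. The Python sketch below is hypothetical and not the methodology used here: it assumes you already have a list of sampled leaf function names (for example, exported from a sampling profiler such as `perf`), and its prefix rules are illustrative guesses based on CRuby naming (`vm_` for VM internals, `newobj` and `gc_` for allocation and GC, `onig` for the Onigmo regexp engine).

```python
from collections import Counter

# Illustrative prefix rules; a real analysis would need a more careful mapping.
CATEGORY_PREFIXES = [
    ("vm_", "VM"),
    ("newobj", "allocation/GC"),
    ("gc_", "allocation/GC"),
    ("onig", "regexp"),
]


def categorize(symbol):
    """Return the category of a sampled function name, or 'other'."""
    for prefix, category in CATEGORY_PREFIXES:
        if symbol.startswith(prefix):
            return category
    return "other"


def breakdown(samples):
    """Return {category: percent of samples} for a list of function names."""
    counts = Counter(categorize(s) for s in samples)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: 100.0 * n / total for category, n in counts.items()}
```

Percentages computed this way only sum to 100 when "other" is included, which matches how a partial breakdown like the one above leaves most of the time unattributed.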

00:15:58.630

In short, method call overhead and the related optimizations are an important area to address in future work.

00:16:05.889

I am open to further questions or discussions afterward.

00:16:16.919

Thank you!